ENTERPRISE AI GOVERNANCE · PILLAR 2 OF 3

Enterprise AI Governance and Compliance: Build Your AI Centre of Excellence

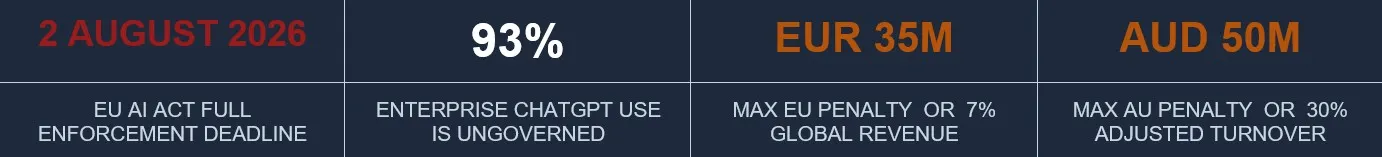

Three major regulatory frameworks converge in 2026. The organisations that govern AI responsibly before the deadline will be faster, more trusted, and more competitive than those that are still preparing when enforcement begins.

The Shadow AI Problem: Why 93% of Enterprise AI Is Already Out of Control

Before your organisation deploys its first officially sanctioned AI system, the AI deployment has already begun. Research consistently shows that 93% of enterprise ChatGPT use occurs through personal, non-corporate accounts — employees accessing public AI tools with work data, work prompts, and work context, completely outside any organisational governance framework. The AI programme your organisation is planning is not the first AI programme running in your organisation.

This is the Shadow AI problem. It is not a policy failure — it is a governance design failure. Employees are not ignoring AI policy because they are reckless. They are ignoring it because the official AI programme is too slow, too restrictive, or too opaque to meet the need they have right now. Shadow AI is the signal that demand exists and supply is failing.

Research signal: 93% of enterprise ChatGPT use is through personal accounts. 88% of employees use AI tools daily. Only 5% use them at an advanced level. The gap between activity and proficiency is a governance and change management problem, not a technology problem.

What Shadow AI Actually Signals — and Why Banning It Makes It Worse

Organisations that respond to Shadow AI with prohibition accelerate the problem. Employees do not stop using AI when it is banned — they become better at hiding it. Every banned tool creates an incentive for employees to find workarounds, use personal devices, and avoid disclosing AI involvement in their work outputs. The prohibition strategy creates the worst possible governance outcome: ungoverned AI that the organisation cannot see, monitor, or remediate.

The governance response to Shadow AI is not restriction — it is competition. An official AI programme that is faster, more capable, and more useful than the shadow alternatives will win the adoption battle without prohibition. This requires a governance architecture designed for speed and utility, not just compliance and control.

Governance Is Not a Brake Pedal — It Is a GPS

The most damaging misconception in enterprise AI governance is that rigorous governance slows AI deployment. The evidence contradicts this entirely. Organisations with mature AI governance frameworks consistently deploy AI faster, close enterprise deals more readily, and attract better AI talent than those that treat governance as a compliance obligation to be minimised. Governance tells you the fastest safe route — it does not decide whether you can move at all.

Three Ways Mature Governance Frameworks Accelerate AI Deployment

Pre-Cleared Pathways

When the AI Governance Committee has classified a category of use cases as low-risk and pre-approved, any new use case in that category can deploy without an individual review cycle. A strong governance framework creates standing approvals, not perpetual review queues. Every governance investment in pre-clearing a category pays dividends on every future deployment in that category.

Enterprise Deal Velocity

Enterprise procurement teams now include vendor risk analysts who evaluate AI governance as a contract qualification threshold. A documented, audited AI governance framework closes deals that competitors without one will lose. Enterprise procurement is asking: 'Show us your AI governance policy, your risk classification framework, and your data handling standards.' Organisations that can answer this question in writing, with evidence, win the deal.

AI Talent Acquisition

Senior AI professionals at the strategy and architecture level increasingly evaluate employers on the quality and seriousness of their AI ethics and governance posture. Organisations that treat governance as a genuine strategic priority attract better AI talent than those that treat it as a compliance burden. The quality of your governance architecture is a talent acquisition signal.

The 2026 Regulatory Landscape: Three Frameworks, One Deadline Window

2026 is the year AI governance transitions from aspiration to enforcement. Three major regulatory frameworks converge within a single calendar year — creating both risk exposure for unprepared organisations and competitive advantage for those with documented governance architectures in place. The highest-common-denominator strategy — designing to EU AI Act standards as the primary benchmark — largely satisfies Australian and SOC 2 obligations with minimal additional effort.

| Framework | Reference | Deadline | Penalty and key requirements |
|---|---|---|---|
| EU AI ACT | Reg. EU 2024/1689 | 2 AUGUST 2026: Full enforcement | Penalty: EUR 35M or 7% global revenue. Four risk tiers. High-risk (Annex III) requires Annex IV technical documentation, human oversight design, bias testing, and conformity assessment. |
| AU PRIVACY ACT | APP 1.7–1.9 | DECEMBER 2026: APP 1.7 in force | Penalty: AUD 50M or 30% adjusted Australian turnover. APP 1.7: Disclose automated decision-making that materially affects individuals. APP 1.8-1.9: What the privacy policy disclosure must contain and which decisions are covered. |
| US SOC 2 | AICPA TSC | CONTINUOUS: No fixed deadline | No financial penalty; the consequence is commercial. Trust Services Criteria: Security, Availability, Processing Integrity, Confidentiality, Privacy. Type II report required covering all AI workloads. |
| ISO 42001 | ISO/IEC 42001:2023 | EMERGING: Fast becoming standard | Voluntary standard, fast becoming a procurement requirement. The AI management system standard. Aligns with EU AI Act governance requirements. Certification emerging as enterprise procurement expectation. |

EU AI Act (Regulation EU 2024/1689) — Full Enforcement: 2 August 2026

The EU AI Act applies a risk-based classification model. The higher the potential harm an AI system poses, the more stringent the obligations on its developers and deployers. Adopted in May 2024, it entered into force in August 2024 with a phased implementation calendar culminating in full Annex III high-risk enforcement on 2 August 2026. Prohibited practices have been banned since February 2025. High-risk system operators must now complete Annex IV technical documentation packages, implement human oversight with documented override capabilities, conduct bias testing against protected attributes, and register systems in the EU AI database.

The Four EU AI Act Risk Tiers

UNACCEPTABLE RISK (Prohibited since February 2025): AI systems that manipulate human behaviour, exploit vulnerabilities of specific groups, enable social scoring, or conduct real-time biometric surveillance in public spaces. Immediate programme termination. Fines: up to EUR 35M or 7% of global annual turnover, whichever is higher.

HIGH RISK (Full enforcement 2 August 2026): AI used in employment and recruitment, credit scoring, educational assessment, healthcare, law enforcement, critical infrastructure, and migration decisions. Requires Annex IV technical documentation, human oversight design, bias testing, and conformity assessment before any production deployment.

LIMITED RISK (Disclosure obligation): AI systems that interact directly with users — chatbots, recommendation engines, AI-generated content tools. Disclosure to users that they are interacting with an AI system is required. Relatively minimal governance overhead compared to high-risk obligations.

MINIMAL RISK (Voluntary code of conduct): Standard business AI tools — spam filters, AI-assisted search, document processing, basic automation. No mandatory obligations. Voluntary good practice code of conduct encouraged.
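The four tiers above can be read as a lookup from tier to pre-deployment obligations. The following is a minimal sketch under that reading, not a legal implementation: the names `RiskTier`, `TIER_OBLIGATIONS`, and `may_deploy` are hypothetical, and the obligation strings are paraphrased from the tier descriptions.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations paraphrased from the tier descriptions above (illustrative only).
TIER_OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["terminate programme"],
    RiskTier.HIGH: [
        "Annex IV technical documentation",
        "human oversight design",
        "bias testing",
        "conformity assessment",
    ],
    RiskTier.LIMITED: ["disclose AI interaction to users"],
    RiskTier.MINIMAL: [],  # no mandatory obligations
}


def may_deploy(tier: RiskTier, completed: set[str]) -> bool:
    """Return True only if every obligation for the tier has been completed."""
    if tier is RiskTier.UNACCEPTABLE:
        return False  # prohibited systems never deploy
    return set(TIER_OBLIGATIONS[tier]) <= completed
```

For example, `may_deploy(RiskTier.HIGH, {"bias testing"})` returns `False` because three of the four high-risk obligations are still outstanding.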

Australian Privacy Act — APP 1.7 Automated Decision-Making: December 2026

Australia's AI governance obligations flow primarily through the Privacy Act 1988 (Cth). The December 2026 deadline activates APP 1.7 disclosure obligations for automated decision-making that uses personal information and materially affects individuals. This covers credit decisions, employment screening, customer segmentation, and any AI system that determines access to services. APP 1.8 and 1.9 set out what the privacy policy must disclose and which kinds of decisions are covered; they are transparency obligations rather than standalone rights to explanation or human review. Organisations must update privacy policies before the deadline, and should establish explainability documentation and individual rights response processes to support those disclosures. Australia has no standalone AI Act, so governance must bridge Privacy Act obligations, ACCC consumer law requirements, and the voluntary AI Ethics Principles framework.
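As a first-pass screen, the APP 1.7 trigger described above can be expressed as three yes/no questions. This sketch is an assumption about how a CoE might encode that screen; the class and field names are hypothetical, and a positive result only flags a system for review by the Governance and Compliance Lead.

```python
from dataclasses import dataclass


@dataclass
class DecisionSystem:
    name: str
    uses_personal_information: bool
    decision_is_automated: bool
    materially_affects_individuals: bool


def app_1_7_review_needed(system: DecisionSystem) -> bool:
    """Flag a system when all three conditions from the text hold together."""
    return (
        system.uses_personal_information
        and system.decision_is_automated
        and system.materially_affects_individuals
    )
```

A credit-decision model that uses applicant data would be flagged; an internal document OCR tool that makes no decisions about individuals would not.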

US SOC 2 Type II — Continuous AI Controls Obligation

SOC 2 Type II audit scrutiny of AI systems has intensified in 2026. Auditors increasingly expect continuous, evidence-based demonstration of controls across the five Trust Services Criteria: Security, Availability, Processing Integrity, Confidentiality, and Privacy. AI-specific controls now expected include model drift monitoring with documented thresholds, output accuracy logging against baseline benchmarks, access controls for AI system modification, and a documented incident response plan with tested restoration procedures. SOC 2 non-compliance carries no regulatory fine, but the commercial consequence is contract termination and enterprise deal loss, an equivalent impact for organisations selling into enterprise markets.
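Drift monitoring "with documented thresholds" can be as simple as comparing current accuracy with the baseline recorded at approval and logging the result. The sketch below assumes a single accuracy metric and a hypothetical `DriftCheck` class; the threshold values are illustrative, not prescribed by SOC 2.

```python
from dataclasses import dataclass


@dataclass
class DriftCheck:
    baseline_accuracy: float  # accuracy recorded when the system was approved
    max_drop: float           # documented threshold, e.g. 0.05 for five points

    def evaluate(self, current_accuracy: float) -> dict:
        """Return a log-ready record stating whether the threshold is breached."""
        drop = self.baseline_accuracy - current_accuracy
        return {
            "baseline": self.baseline_accuracy,
            "current": current_accuracy,
            "drop": round(drop, 4),
            "breach": drop > self.max_drop,
        }
```

Each returned record can be appended to the audit trail, so the evidence exists whether or not a breach occurred.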

ISO/IEC 42001:2023 — The Emerging Commercial Standard

ISO 42001 is the international standard for AI management systems. While currently voluntary, it is fast becoming a commercial expectation in enterprise procurement, particularly in regulated industries and EU-facing contracts. Its governance requirements align closely with the EU AI Act framework — organisations designing to EU AI Act standards gain significant ISO 42001 alignment as a side effect. Certification is emerging as a procurement qualification threshold in the same way ISO 27001 became table stakes for enterprise cybersecurity vendors over the preceding decade.

The Four-Layer Enterprise AI Governance Architecture

A cross-border AI governance architecture that satisfies the EU AI Act, Australian Privacy Act, and SOC 2 simultaneously requires four interdependent operating layers. Each layer builds on the one below it. Weaknesses in any layer cascade upward into compliance failure, deployment delay, or strategic misalignment. The architecture is designed to be scalable — operating the same model whether the AI portfolio contains 5 use cases or 50.

Layer 1 — Strategic: The AI Governance Committee

The AI Governance Committee is the senior leadership body responsible for AI strategy, risk appetite, portfolio approval, and board accountability. It meets quarterly with membership comprising: CAIO (or AI Strategy Lead), CRO, CPO, CISO, General Counsel, and CFO. Its decision rights cover all high-risk AI systems, any AI use case with regulatory classification implications, and the annual AI governance report to the board. Layer 1 sets the risk appetite that determines what Layer 2 can approve without escalation.

Primary regulatory alignment: All three frameworks (EU AI Act Article 17 quality management system, AU Privacy Act governance obligations, SOC 2 management oversight)

Layer 2 — Operational: The AI Centre of Excellence

The AI Centre of Excellence (CoE) is the operational body that owns governance standards, shared infrastructure, the tool approval register, Kill/Scale gates, and EU AI Act technical documentation for every production system. It is the compliance engine that translates Layer 1 risk appetite into operational controls. Every new AI use case passes through the CoE before deployment. The CoE does not own all AI — it owns the standards, the infrastructure, and the audit trail that allow business functions to build and operate AI safely.

Primary regulatory alignment: EU AI Act Annex IV technical documentation; SOC 2 Processing Integrity and Privacy TSC; governance lead responsible for AU Privacy Act APP 1.7 classification

Layer 3 — Product and Delivery: AI Product Managers

Named AI Product Managers own each production use case from approval through deployment to operational handover. They complete the Success Contract, maintain technical documentation, validate business impact against the original ROI case, and manage the handover to business function operational ownership at Phase 4. Every production AI system has a named AI Product Manager accountable for its performance, compliance, and remediation pathway in the event of a Tier 1 or Tier 2 incident.

Primary regulatory alignment: EU AI Act named operator accountability; AU Privacy Act individual rights response process; SOC 2 ownership and change management controls

Layer 4 — Embedded Function: AI Champions and Business Unit Owners

AI Champions (1 per 20-25 staff) are the peer-level adoption and governance support infrastructure. They are not a hire — they are developed from the existing workforce through the Tier 3 skills certification programme. Champions identify Shadow AI instances in their function and escalate to the CoE, monitor compliance at the operational level, and provide real-time feedback on governance friction. Business Unit AI Owners have operational accountability for all AI systems running within their function, including the obligation to report incidents to the CoE within the defined response timeframe.

Primary regulatory alignment: EU AI Act human oversight requirements at the operational level; AU Privacy Act individual rights management; SOC 2 operational controls monitoring
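The Champion ratio of 1 per 20-25 staff translates into a headcount range for each business function. A small sketch of that arithmetic, with `champions_needed` as a hypothetical helper and rounding up assumed so that no staff are left uncovered:

```python
import math


def champions_needed(headcount: int) -> tuple[int, int]:
    """Return (minimum, maximum) Champions for a function of the given size."""
    if headcount <= 0:
        return (0, 0)
    fewest = math.ceil(headcount / 25)  # one Champion per 25 staff
    most = math.ceil(headcount / 20)    # one Champion per 20 staff
    return (fewest, most)
```

A function of 400 staff would need between 16 and 20 Champions under this reading.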

AI Risk Classification: The Decision Tool Before Every Deployment

Every AI use case must be classified before it is approved for pilot. Classification determines the governance overhead required, the documentation standard needed, the human oversight design, and the regulatory notification obligations. Each use case is assigned to one of four tiers, derived from the EU AI Act framework as the highest-common-denominator standard.

| Risk Tier | Definition | EU AI Act Alignment | Governance Requirement | Examples |
|---|---|---|---|---|
| UNACCEPTABLE (Prohibited) | AI that manipulates behaviour, exploits vulnerabilities, enables social scoring, or conducts public biometric surveillance | Prohibited since February 2025. EUR 35M or 7% revenue penalty. | Immediate programme termination. No exceptions. Legal review required. | Social scoring. Subliminal manipulation. Public real-time biometrics. |
| HIGH RISK (Annex III) | AI in employment, credit, critical infrastructure, education, healthcare, law enforcement, migration | Full enforcement 2 August 2026. Annex IV technical documentation required. | Conformity assessment, bias testing, human oversight design, EU AI database registration, named operator. | CV screening AI. Credit scoring. Medical diagnosis. Employee monitoring. |
| LIMITED RISK (Disclosure) | AI that interacts with users or generates content without user awareness | Disclosure obligation: users must know they are interacting with AI | Transparency notice. AI disclosure in all user-facing touchpoints. Privacy policy update. | Customer chatbots. AI-written content. Recommendation engines. |
| MINIMAL RISK (Voluntary) | Standard AI tools with no significant harm potential and no regulated decision-making | No mandatory obligations. Voluntary good practice code of conduct. | Internal use policy. Basic logging. Standard IT access controls. | Spam filters. Document OCR. AI-assisted search. Basic automation. |

How to Apply the Risk Classification Framework to Your AI Inventory

Apply the framework to every AI system in your current inventory and every proposed use case before pilot approval.

Step 1: Conduct an enterprise-wide AI inventory audit covering all deployed and in-development AI systems.

Step 2: Classify each system against the four tiers using the EU AI Act as the primary standard.

Step 3: Apply the Australian APP 1.7 trigger test to all systems handling personal information of Australian individuals.

Step 4: Assess SOC 2 Trust Services Criteria exposure.

Step 5: Document classification, rationale, governance requirements, and named accountable person for every system.
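The output of the five steps is one documented record per system. The sketch below assumes a simple record shape; `InventoryRecord` and its field names are hypothetical, and the `gaps` check only tests that the Step 5 documentation fields are filled in.

```python
from dataclasses import dataclass, field

VALID_TIERS = {"unacceptable", "high", "limited", "minimal"}


@dataclass
class InventoryRecord:
    system: str                                             # Step 1: inventory entry
    eu_tier: str                                            # Step 2: EU AI Act tier
    app_1_7_triggered: bool                                 # Step 3: APP 1.7 trigger test
    soc2_criteria: list[str] = field(default_factory=list)  # Step 4: exposed criteria
    rationale: str = ""                                     # Step 5: why this tier
    accountable_person: str = ""                            # Step 5: named owner

    def gaps(self) -> list[str]:
        """List what is still missing before the record counts as documented."""
        missing = []
        if self.eu_tier not in VALID_TIERS:
            missing.append("eu_tier")
        if not self.rationale:
            missing.append("rationale")
        if not self.accountable_person:
            missing.append("accountable_person")
        return missing
```

A record with an empty `gaps()` list is ready to be filed; anything else goes back to the classifier.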

Shadow AI Containment: From Prohibition to Governed Migration

Shadow AI containment is not a technology problem — it is a governance response problem. The most effective containment strategy does not start with prohibition; it starts with discovery. Organisations cannot govern what they cannot see. The first step is building a complete picture of what AI is actually running in the organisation before designing the response.

The goal of the containment programme is not to eliminate employee AI use. It is to migrate high-risk ungoverned AI use into governed, official channels — and to do so by making the official channels faster, more capable, and more useful than the shadow alternatives. The containment programme succeeds when employees choose the official AI programme, not because they are forced to, but because it is better.

The Five-Step Shadow AI Containment Playbook

STEP 1 — DISCOVERY:

Conduct a confidential Shadow AI audit across all business functions. Survey employees directly, review IT access logs for AI domain traffic, and assess the IP and regulatory risk of each identified tool and use pattern. Classify findings as Low (personal productivity tools with no work data), Medium (work data in ungoverned tools), or High (regulated data or personal information in ungoverned tools).

STEP 2 — RISK TRIAGE:

For each High-risk Shadow AI instance, quantify the actual exposure: IP leakage risk, regulated data breach risk, EU AI Act compliance gap, AU Privacy Act APP 1.7 trigger assessment. Do not attempt to address all Shadow AI simultaneously — prioritise by risk tier and remediate High-risk instances first within 30 days.

STEP 3 — GOVERNED ALTERNATIVES:

For each major Shadow AI use pattern identified, develop or fast-track an official governed alternative. If employees are using ChatGPT for contract drafting, build a governed, organisation-specific contract drafting tool within the CoE infrastructure. Make the official version measurably better — faster, more accurate, more domain-specific, better integrated.

STEP 4 — TRANSPARENT MIGRATION:

Communicate the discovery findings to the workforce without blame attribution. Frame the migration to official tools as an improvement, not a restriction. Publish the AI acceptable use policy as a clear, practical guide — not a legal document designed to minimise liability. Give employees a specific timeline for migrating each shadow tool.

STEP 5 — ONGOING MONITORING:

Establish a quarterly Shadow AI re-audit as a standing CoE activity. Shadow AI is not a one-time problem — it recurs as new tools emerge and as employee AI sophistication grows. The CoE maintains a live approved tool register and a fast-track approval pathway for new tools identified by Champions, so that legitimate demand can be met quickly through official channels rather than driving new Shadow AI adoption.
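Steps 1 and 2 of the playbook amount to a classification rule followed by a priority sort. A minimal sketch, assuming each audit finding records two yes/no facts about the data involved; `ShadowFinding`, `risk_level`, `remediation_due`, and `triage` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ShadowFinding:
    tool: str
    uses_work_data: bool
    uses_regulated_or_personal_data: bool
    found_on: date


def risk_level(finding: ShadowFinding) -> str:
    """Apply the Low / Medium / High classes from Step 1."""
    if finding.uses_regulated_or_personal_data:
        return "High"
    if finding.uses_work_data:
        return "Medium"
    return "Low"


def remediation_due(finding: ShadowFinding) -> Optional[date]:
    """High-risk findings get the 30-day window from Step 2; others get no fixed date here."""
    if risk_level(finding) == "High":
        return finding.found_on + timedelta(days=30)
    return None


def triage(findings: list[ShadowFinding]) -> list[ShadowFinding]:
    """Order findings so High-risk instances are handled first."""
    order = {"High": 0, "Medium": 1, "Low": 2}
    return sorted(findings, key=lambda f: order[risk_level(f)])
```

The sort is stable, so findings within the same risk level keep the order in which the audit recorded them.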

Risk escalation note: Any Shadow AI instance involving regulated personal information, health data, financial data, or EU market data should be treated as a potential AU Privacy Act or EU AI Act compliance incident and assessed by your Governance and Compliance Lead within 48 hours of identification.

The AI Centre of Excellence: Your Governance Infrastructure

The AI Centre of Excellence is not a team. It is an organisational capability: the infrastructure that makes every subsequent use case cheaper, faster, and safer to deploy than the one before it. Without a CoE, organisations produce scattered pilots, bespoke investments that cannot be shared, and vendor relationships that no one manages centrally. Shell's AI CoE began as a coordination body and evolved into a deep expertise centre. In 2013 it had 30 AI practitioners; today it has 4,000. The lesson: the CoE does not own all AI. It owns the standards, the shared infrastructure, and the governance that allows all business functions to build AI with confidence.

The Six Core CoE Roles and Their Accountability Boundaries

| Role | Primary Accountability | Phase |
|---|---|---|
| CoE DIRECTOR / CAIO | Enterprise AI strategy, board reporting, governance posture, CoE leadership, and programme direction. | Active across all phases. Owns the Annual AI Report at Phase 5 and the Governance Committee relationship. |
| AI ARCHITECTS (x2) | Technical AI system design, platform architecture, model selection, integration design. | Phases 2-5. Owns the Technology Stack Decision at Phase 2 and scale infrastructure design at Phase 4. |
| DATA ENGINEERS (x2) | AI-ready data pipelines, data quality assurance, governance enforcement, ETL/ELT for AI workloads. | Phases 2-5. Owns the Data Readiness Assessment at Phase 2 and the shared pipeline foundation at Phase 4. |
| GOVERNANCE AND COMPLIANCE LEAD | EU AI Act compliance, AU Privacy Act, SOC 2 controls, audit trail management, regulatory horizon scanning. | Phase 2 onwards. Owns Kill/Scale Gate 4 (Compliance Gate) for every production deployment. |
| AI PRODUCT MANAGERS (by portfolio) | Use case ownership, Success Contract management, technical documentation, business impact validation, handover to operational ownership. | Phase 3 onwards. One named PM per production use case. |
| CHANGE MANAGEMENT LEAD | Workforce transformation programme, Champion Network management, 3-tier skills certification delivery, Shadow AI migration communications. | Phase 2 onwards. Owns AI adoption rate as primary metric. |

CoE Evolution: Centralised to Federated in 36 Months

CENTRALISED (Phases 1-2):

The CoE owns all AI programme activity, maintains all governance, controls all access and approvals. This is correct at programme inception — the organisation needs tight central control while governance infrastructure is being established.

HUB AND SPOKE (Phases 3-4):

The CoE maintains standards, shared infrastructure, and governance. Business functions own delivery within those standards. Champions are the spoke connections. Speed-to-production accelerates from 60 days to 30 days as shared foundations mature.

FEDERATED (Phase 5):

Business functions operate with full autonomy within the CoE governance framework. The CoE evolves from a delivery body to a standards and excellence body. Speed-to-production target: under 21 days. AI adoption rate target: 80% of eligible workforce.

Read: From Chaos to Control — Structuring Your First AI CoE

Governance as Competitive Advantage: Three Commercial Mechanisms

The counterintuitive commercial truth about mature AI governance is that it creates speed, not friction. The organisations consistently winning enterprise AI deals, attracting top AI talent, and deploying use cases at the highest velocity in 2026 are not the organisations with the lightest governance footprint — they are the organisations with the most mature governance architecture.

Pre-Cleared Deployment Pathways

When the AI Governance Committee has classified a category of use cases as low-risk and pre-approved — content generation tools for internal use, document summarisation within approved data boundaries, standard customer service chatbots with disclosure in place — product teams deploy within that category without individual review cycles. Every classification decision compounds. The governance investment in the first use case pre-clears the next twenty in the same category. Well-designed governance becomes a deployment accelerator, not a review queue.
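A pre-cleared pathway behaves like a standing-approval register: a new use case is checked against the register before any review is scheduled. The sketch below uses the three example categories from the paragraph above; the register contents and the `review_route` function are illustrative assumptions.

```python
# Categories the Governance Committee has pre-approved (examples from the text).
PRE_CLEARED = {
    "internal content generation",
    "document summarisation within approved data boundaries",
    "customer service chatbot with disclosure",
}


def review_route(category: str) -> str:
    """Route a new use case: standing approval if pre-cleared, else individual review."""
    if category in PRE_CLEARED:
        return "deploy under standing approval"
    return "individual review"
```

Adding a category to the register is the one-off governance investment; every later lookup in that category skips the review queue.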

Enterprise Deal Velocity

Enterprise procurement teams routinely include AI governance as a vendor qualification threshold. Requests for Proposals now include AI governance questions as standard: What is your AI policy? How do you classify AI risk? What is your data handling standard for AI workloads? What are your human oversight protocols? Organisations with documented, audited answers close deals that competitors without them lose. The AI governance framework is a revenue asset, not a compliance cost.

AI Talent Acquisition

Senior AI professionals at the strategy, architecture, and governance level now evaluate employers on the seriousness of their AI ethics and governance posture. A CAIO, AI Architect, or AI Governance Lead who has spent years building responsible AI programmes will not accept a position at an organisation that treats governance as a box-checking exercise. The quality of your governance framework is a talent acquisition signal that differentiates you from competitors offering equivalent compensation but treating AI ethics as a compliance afterthought.

"I have seen organisations use governance as a brake pedal. My approach uses it as a GPS — it tells you the fastest safe route, not whether you can move at all." — Matthew Bulat, CAIO

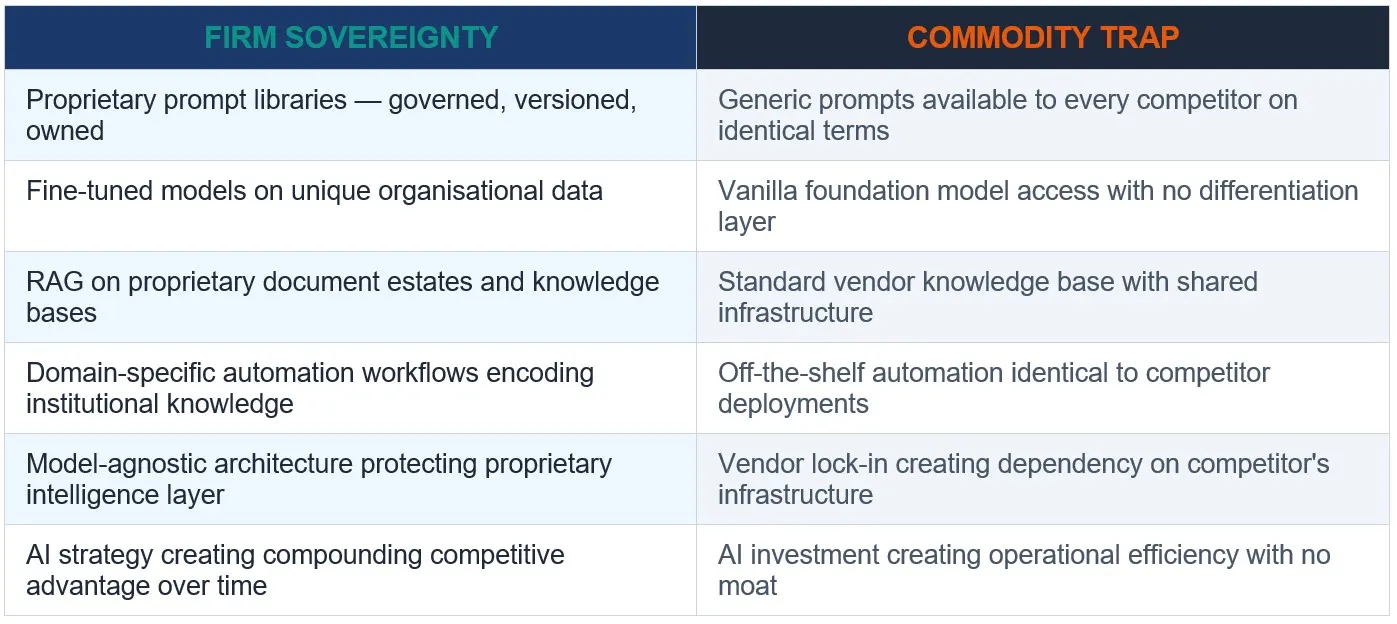

Firm Sovereignty and the Commodity Trap

The final governance question for every enterprise AI programme is not compliance — it is strategic differentiation. Satya Nadella, Davos 2026: 'A company's ability to embed its tacit knowledge in models it controls.' Firm Sovereignty is the AI governance outcome that creates durable competitive advantage: AI systems built on the organisation's proprietary data, trained on its institutional knowledge, and governed in a way that ensures the intelligence remains a controlled, proprietary asset. These systems cannot be purchased by a competitor. They are built, governed, and owned.

The opposite of Firm Sovereignty is the AI Commodity Trap: deploying generic AI tools that every competitor has access to on identical terms, building on vendor-controlled infrastructure with no proprietary differentiation layer, and calling the result an AI strategy. Generic AI creates parity. It does not create advantage. Governance that is designed only to comply, without building the proprietary AI asset layer, misses the strategic value entirely.

Building the AI Moat That Cannot Be Purchased

The governance architecture's role in Firm Sovereignty is to protect and grow the proprietary AI asset layer. This means: governing access to proprietary training data with the same rigour as any IP asset; maintaining an inventory of proprietary prompt libraries, fine-tuned models, and domain-specific automation workflows; designing the technology stack to be model-agnostic so that vendor changes do not destroy the proprietary intelligence layer built on top; and ensuring that the organisational knowledge encoded in AI systems is documented, versioned, and governed as a strategic asset.

Board Reporting and Incident Response

Governance without reporting is aspiration. The quarterly AI Governance Dashboard and the Annual AI Report are the mechanisms by which the board develops genuine oversight capability — not through technical education, but through consistent exposure to the same metrics in the same format, quarter after quarter, enabling comparison and trend recognition.

The Quarterly AI Governance Dashboard

PRODUCTION COUNT: Total use cases live in production with full governance. Year-on-year trend. The primary leading indicator of transformation velocity, rather than pilot count or project count.

VALUE REALISED: Cumulative business value captured in cash actuals (cost savings, revenue lift, risk reduction). Not forecast projections. Not annualised estimates. Actuals only. J-curve position shown alongside.

COMPLIANCE STATUS: EU AI Act classification status across all production systems. AU Privacy Act APP 1.7 assessment status. SOC 2 control status summary. Outstanding remediation items with named owners and deadlines.

SHADOW AI INCIDENTS: New shadow AI instances identified in the quarter, classified by risk tier. Remediation status of prior quarter findings. Trend direction: improving or deteriorating.

AI ADOPTION RATE: Percentage of eligible workforce actively using approved AI tools weekly. Trend. Champion Network coverage. Skills certification completion by tier.
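The AI adoption rate on the dashboard is a simple ratio of weekly active users of approved tools to the eligible workforce. A sketch of the calculation, assuming both counts are available from tool usage logs and HR data; `adoption_rate` is a hypothetical helper.

```python
def adoption_rate(weekly_active_users: int, eligible_workforce: int) -> float:
    """Percentage of the eligible workforce using approved AI tools this week."""
    if eligible_workforce <= 0:
        raise ValueError("eligible_workforce must be positive")
    return round(100 * weekly_active_users / eligible_workforce, 1)
```

With 640 weekly active users out of 800 eligible staff, the function returns 80.0, which matches the Phase 5 adoption target stated earlier.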

Incident Classification and Response Protocol

TIER 1 — CRITICAL:

Regulatory breach, significant personal data exposure, prohibited AI system identified, or EU AI Act Annex III non-compliance. Response within 2 hours. CAIO + Legal + CEO notified immediately. Regulatory notification within 72 hours if required.

TIER 2 — HIGH:

Shadow AI with regulated data, AI output causing material harm, or governance gate failure. Response within 24 hours. CoE Director + Governance Lead + relevant Business Unit notified.

TIER 3 — MEDIUM:

Policy non-compliance without regulatory exposure, Shadow AI without regulated data, or audit trail gap. Response within 5 business days. CoE Governance Lead notified. Remediation plan within 10 business days.

TIER 4 — LOW:

Minor process deviation, tool usage outside approved parameters with no data risk. Response within 10 business days. Champion Network escalation. Logged and monitored for recurrence.
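The four incident tiers map directly to response windows, which makes the deadline computable at the moment an incident is logged. The sketch below assumes business days exclude Saturdays and Sundays only (no public holiday calendar); `RESPONSE_WINDOWS`, `add_business_days`, and `response_deadline` are hypothetical names.

```python
from datetime import datetime, timedelta

# Response windows taken from the tier definitions above.
RESPONSE_WINDOWS = {
    1: ("hours", 2),
    2: ("hours", 24),
    3: ("business_days", 5),
    4: ("business_days", 10),
}


def add_business_days(start: datetime, days: int) -> datetime:
    """Advance by the given number of weekdays, skipping Saturday and Sunday."""
    current = start
    while days > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday is 0, Friday is 4
            days -= 1
    return current


def response_deadline(tier: int, reported_at: datetime) -> datetime:
    """Return the latest acceptable response time for an incident of this tier."""
    unit, amount = RESPONSE_WINDOWS[tier]
    if unit == "hours":
        return reported_at + timedelta(hours=amount)
    return add_business_days(reported_at, amount)
```

A Tier 3 incident reported on a Monday morning is therefore due the following Monday, because the intervening weekend does not count.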

Download the AI Governance Framework Template

The board-ready, compliance-mapped, editable DOCX template that implements this framework in your organisation. Covers EU AI Act, Australian Privacy Act, and SOC 2 governance requirements. Structured for immediate use — not a reference document, a deployment template.

AI Governance Framework Template: Board-ready, compliance-mapped, editable DOCX. Covers EU AI Act, AU Privacy Act, SOC 2 alignment. Risk classification matrix. Four-layer architecture template. Incident response protocol.

Enterprise AI Readiness Checklist: 25 validation questions before you deploy anything. Includes the Governance dimension assessment. Instant PDF, no email required.

30-Minute AI Strategy Session: Direct session with Matthew Bulat. Specific, actionable next step for your governance programme. 30 minutes. No fluff.

Explore the Governance Cluster: Related Deep-Dives

The Shadow AI Problem: 93% of Enterprise ChatGPT Use Is Through Personal Accounts →

Why Banning ChatGPT Isn't Working — and What Your AI Policy Should Say Instead →

AI Governance in 2026: Why Compliance Is Your New Competitive Advantage →

From Chaos to Control: Structuring Your First AI Centre of Excellence →

Firm Sovereignty: Building an AI Moat Your Competitors Can't Copy →

The AI Commodity Trap: Why Generic AI Creates Parity, Not Competitive Advantage →

← Explore Pillar 1: Enterprise AI Transformation Playbook

Explore Pillar 3: AI Buy vs Build Decision Framework →

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. Former CTO, Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and 8+ year University Lecturer in IT and engineering at CQUniversity.

The governance framework in this pillar page is not theoretical — it is operational. Expert AI Prompts runs 30 industries, 1,500+ domain-specific prompts, 15 AI workflow systems, and fully automated operations with near-zero daily intervention. The compliance architecture described here is the architecture that governs that system.

Former CTO · Federal Government Technical Operations Manager | University Lecturer, CQUniversity (8+ years) | Founder, Expert AI Prompts — 30 industries, 1,500+ prompts | Inbound executive interest: AI Strategy Leader, $300K+ USD base

Matthew Bulat · CAIO / AI Strategy Leader · Expert AI Prompts

[email protected] · expertaiprompts.com · 0407 320 726 · Townsville, QLD, Australia

© 2026 Expert AI Prompts. All rights reserved.