
ENTERPRISE AI TRANSFORMATION · PILLAR 1 OF 3

The Enterprise AI Transformation Playbook: 5-Phase Framework to End Pilot Purgatory

Most enterprise AI programmes stall not because the technology fails — but because the organisation isn't built to absorb it. This playbook gives you the execution architecture to change that.

Why Enterprise AI Pilots Fail — The Pilot Purgatory Problem

Enterprise AI transformation is failing at scale, not because the technology does not work, but because organisations treat it as a technology project rather than an organisational operating system change. The result is Pilot Purgatory: a state in which proof-of-concepts are approved, funded and deployed, then quietly shelved, extended indefinitely, or declared 'promising' without ever reaching production.

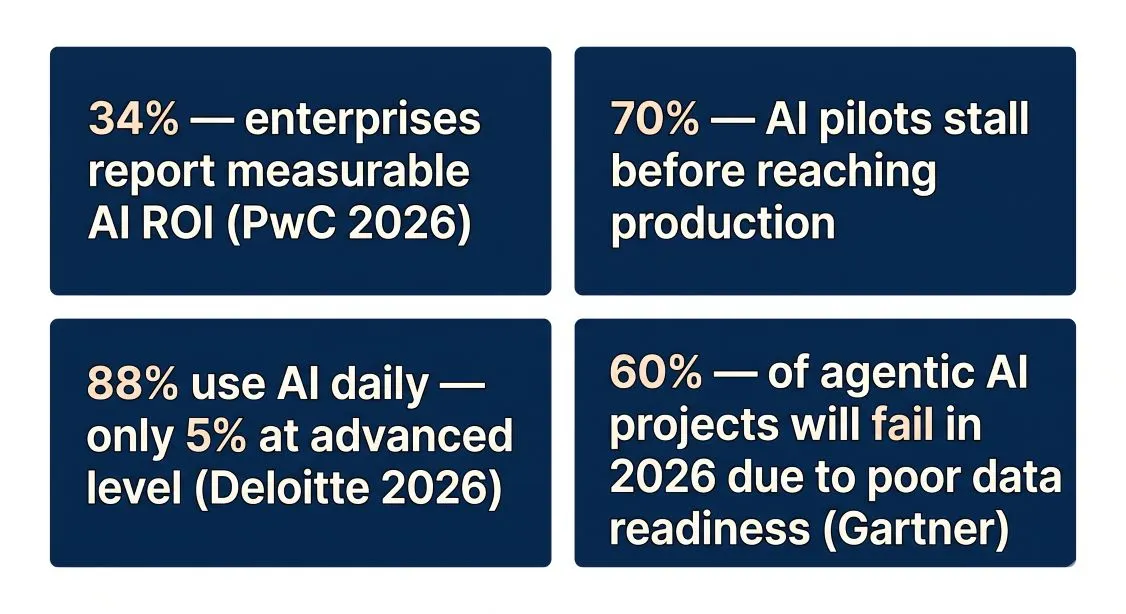

The numbers are unambiguous. Only 34% of enterprises report that their AI programmes produce measurable financial impact. 93% of enterprise ChatGPT use occurs through non-corporate accounts — employees are meeting their AI needs with public tools because the official programme is too slow or too bureaucratic. 88% of employees use AI daily, but only 5% use it in genuinely advanced ways. Activity without proficiency is noise, not transformation.

The Evidence: What Stanford's 51-Case Study Found

Stanford Digital Economy Lab, April 2026 — analysis of 51 successful enterprise AI deployments: 'Same technology. Same use cases. Vastly different outcomes. The difference was never the model — it was always the organisation: its readiness, its processes, its leadership, and its willingness to change.'

The pattern is consistent. Organisations that achieve measurable AI transformation share one characteristic that has nothing to do with their technology stack: they treat AI as an organisational operating system change, not a technology upgrade. They invest in governance before they invest in models. They assess their data before they invest in deployment. They design for 70% people-and-process change before they allocate 10% of their budget to algorithms.

Every enterprise AI transformation must establish these four pillars before the five-phase execution begins. They are prerequisites — not outputs. No phase can succeed without all four in place.


Pillar 1 · Strategy: Every Initiative Ladders to a Business Outcome

Every AI initiative must ladder to one of the organisation's top 3–5 strategic objectives. The Firm Sovereignty question must be answered before any tool is selected: What does our organisation know that no one else knows? Where does that knowledge live? How do we make AI serve it? Anti-pattern: 'Everyone gets Copilot' is not a strategy. Generic AI creates parity, not competitive advantage.

Pillar 2 · Data: The Constraint That Determines Everything Else

AI-ready data is not the same as good data. It must be clean, accessible, correctly permissioned in every jurisdiction where it is used, and structured for AI inference. Most enterprise data is not AI-ready. Gartner 2026: 60% of agentic AI projects will fail due to lack of AI-ready data. Data infrastructure, not executive ambition, is the binding constraint.

Pillar 3 · Tooling: Model-Agnostic Architecture

The tooling stack must answer four questions: Does it enable non-engineers to build? Does IT retain control? Does it avoid single-vendor lock-in? Does it integrate with where work actually happens (ERP, HRIS, CRM, data warehouse)? Model-agnosticism is a strategic imperative: vendor lock-in at the model layer creates the same fragility as single-vendor cloud dependency.

Pillar 4 · Change: The 70% That Most Programmes Ignore

BCG 10-20-70 Principle: algorithms (10%) plus data and technology (20%) account for 30% of AI success; the remaining 70% is people, processes and culture. Yet most AI investment targets the 30% that determines only 30% of success. Signal: 93% of enterprise ChatGPT use is through personal accounts. When the official programme is too slow, employees solve their own problem. The fix is competition, not restriction.

The 5-Phase Enterprise AI Transformation Framework

Overview: From Assessment to Transformation in 36 Months

The five-phase model is not a theoretical construct — it is derived from the common patterns in successful enterprise AI transformations. No phase attempts comprehensive design before deployment. Governance is established before the first pilot; the first pilot does not wait for perfect governance. Each phase produces working outputs before the next phase begins.

Stanford, 51 Cases: 'Every successful enterprise AI deployment used an iterative approach. None used waterfall planning. Start small. Learn. Expand.'

| Phase | Name | Primary Objective | Key Deliverables | Timeline |
|---|---|---|---|---|
| 1 | Assess & Align | Strategic clarity, AI maturity baseline, executive commitment, and defined success criteria before any deployment begins | AI Maturity Assessment · Exec Sponsor Charter · Top-5 Use Case Shortlist · Buy vs Build Decisions | Weeks 1–8 |
| 2 | Govern & Prepare | Build governance framework, data foundation, CoE structure, and tooling architecture before any production deployment | AI Governance Framework · Data Readiness Report · CoE Charter · Technology Stack Decision | Weeks 9–20 |
| 3 | Pilot & Prove | Deploy 3–5 Wave 1 use cases with full production governance. Validate the 2× productivity gate. Prove the operating model to the board | 3–5 Production Deployments · Validated ROI · Board Value Report · Wave 2 Pipeline | Weeks 21–40 |
| 4 | Scale & Embed | Expand to 10+ use cases across business functions. Transition AI from a programme to an operational capability owned by business functions | 10+ Production Deployments · Champion Network · AI Skills Certification · Wave 3 Pipeline | Months 11–24 |
| 5 | Transform & Optimise | Achieve 4× speed with quality as the standard operating model. Establish Firm Sovereignty AI assets. Deliver Annual Board Report | 4× Benchmark Achieved · Firm Sovereignty Assets · Annual AI Report · Year 4–6 Roadmap | Months 25–36 |

PHASE 1 — ASSESS AND ALIGN · Weeks 1–8

Objective: Strategic Clarity Before the First Line of Code

The most common transformation failure is skipping this phase — beginning with use case selection before the organisation understands its actual AI maturity, and before the executive sponsor has formally committed to the programme with genuine decision authority. Phase 1 is not preparation for transformation. It is the first transformation deliverable.

Score the organisation across six dimensions — Strategy, Data, Technology, Governance, Talent, and Culture — on a 1-to-4 scale. Most enterprises should not target Stage 4 immediately; research suggests 18 to 36 months to progress one stage. Set realistic ambitions based on current stage, not aspirational targets.
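The scoring step can be sketched as follows. This is a minimal illustration, assuming integer scores of 1 to 4 per dimension and an overall stage equal to the average score rounded down. The rounding rule, the `maturity_stage` function and the example scores are hypothetical; the six dimensions and the four stage names (Exploring / Experimenting / Scaling / Transforming) come from this playbook.

```python
from statistics import mean

# Six dimensions and four stage names as used in this playbook.
DIMENSIONS = ("Strategy", "Data", "Technology", "Governance", "Talent", "Culture")
STAGES = {1: "Exploring", 2: "Experimenting", 3: "Scaling", 4: "Transforming"}


def maturity_stage(scores: dict[str, int]) -> tuple[str, str]:
    """Return (overall stage, weakest dimension) from 1-4 scores.

    Assumption (hypothetical rule): the overall stage is the mean score
    rounded down, so one strong dimension cannot lift the whole organisation.
    """
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if scores[name] not in STAGES:
            raise ValueError(f"{name} score must be 1-4, got {scores[name]}")
    overall = int(mean(scores[d] for d in DIMENSIONS))  # int() floors positive values
    weakest = min(DIMENSIONS, key=lambda d: scores[d])   # first lowest-scoring dimension
    return STAGES[overall], weakest


# Hypothetical example: a data-constrained organisation.
example = {"Strategy": 3, "Data": 1, "Technology": 3,
           "Governance": 2, "Talent": 2, "Culture": 2}
print(maturity_stage(example))  # ('Experimenting', 'Data')
```

Reporting the weakest dimension alongside the stage reflects the playbook's point that data is often the binding constraint.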

Key Phase 1 Deliverables

AI Maturity Assessment Report — scored across 6 dimensions, mapped to a maturity stage (Exploring / Experimenting / Scaling / Transforming)

Executive Sponsor Charter — signed by the named sponsor, defining decision authority, budget authority, conflict resolution process, and personal accountability for programme outcomes

Top-5 Use Case Shortlist — scored via the AI Use Case Prioritisation Matrix across 6 dimensions. These become the Wave 1 pilot portfolio

Preliminary Buy vs Build Decision — framework applied to the Top-5 shortlist before tooling spend is committed
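The shortlisting step can be sketched as a simple additive scoring matrix. The playbook specifies six dimensions but does not name them in this section, so the dimension names, the 1-to-5 scale, the equal weighting and the candidate use cases below are all hypothetical placeholders for whatever the actual AI Use Case Prioritisation Matrix defines.

```python
from dataclasses import dataclass

# Hypothetical dimension names; every dimension is scored so that higher is better
# (e.g. a high "risk" score means LOW delivery risk).
DIMENSIONS = ("business_value", "strategic_fit", "data_readiness",
              "feasibility", "risk", "time_to_value")


@dataclass
class UseCase:
    name: str
    scores: dict[str, int]  # 1 (weak) to 5 (strong) per dimension

    def total(self) -> int:
        """Equal-weighted sum across the six dimensions."""
        return sum(self.scores[d] for d in DIMENSIONS)


def top_five(candidates: list[UseCase]) -> list[str]:
    """Rank candidates by total score (highest first) and keep the top five."""
    ranked = sorted(candidates, key=UseCase.total, reverse=True)
    return [uc.name for uc in ranked[:5]]


def uniform(score: int) -> dict[str, int]:
    """Helper for the example: the same score on every dimension."""
    return dict.fromkeys(DIMENSIONS, score)


candidates = [
    UseCase("Contract review", uniform(4)),                             # 24
    UseCase("Invoice matching", uniform(5)),                            # 30
    UseCase("General-purpose chatbot", uniform(1)),                     # 6
    UseCase("Sales call summaries", uniform(3)),                        # 18
    UseCase("HR policy Q&A", uniform(2)),                               # 12
    UseCase("Demand forecasting", uniform(3) | {"data_readiness": 5}),  # 20
]
print(top_five(candidates))
# ['Invoice matching', 'Contract review', 'Demand forecasting', 'Sales call summaries', 'HR policy Q&A']
```

Equal weighting keeps the sketch simple; a real matrix would likely weight business value and data readiness more heavily, given the playbook's emphasis on both.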

Gate to Phase 2: Executive Sponsor Charter signed · AI Maturity stage confirmed · Top-5 Use Case Shortlist approved by sponsor · Buy vs Build decisions made for Wave 1

PHASE 2 — GOVERN AND PREPARE · Weeks 9–20

Objective: Build the Infrastructure That Makes Scale Possible

Phase 2 investment is front-loaded and produces no production AI deployments. This is intentional. Every dollar invested in Phase 2 reduces the cost of the next ten deployments. Governance established here (data standards, access controls, compliance mapping, CoE structure) is the shared foundation that makes Phases 3, 4 and 5 progressively cheaper and faster.

The governance framework is not a policy document. It is operational infrastructure: defining how AI use cases are approved, how models are accessed, how outputs are audited, and how regulatory compliance is evidenced. Without this infrastructure, every new use case is a governance event rather than a delivery event.

Key Phase 2 Deliverables

AI Governance Framework — risk classification system, policy set, compliance mapping to EU AI Act / AU Privacy Act / SOC 2, approved tool register

Data Readiness Report — 7-dimension assessment: availability, lineage, integration, accuracy, latency, security, governance. Clear remediation roadmap for Wave 1 use cases

AI Centre of Excellence Charter — CoE mission, scope of authority, membership, decision rights matrix, operating cadence

Technology Stack Decision — vendor selection, integration architecture, model access protocol, data pipeline design

Gate to Phase 3: Governance framework approved by legal and compliance · Data readiness confirmed for Wave 1 use cases · CoE operational with named Director · Technology stack procured and integrated

Related: AI Governance Framework — Full Guide

PHASE 3 — PILOT AND PROVE · Weeks 21–40

Objective: Validate the Operating Model in Production

Phase 3 is where the difference between a pilot and a proof becomes real. The 3–5 Wave 1 use cases selected in Phase 1 are deployed with full production governance: data pipelines live, access controls enforced, outputs audited, and a 2× productivity gate measured within 90 days of deployment. A use case that cannot demonstrate 2× productivity improvement at this stage is killed, not extended.

The Board Value Report at the end of Phase 3 is the most important communication event in the transformation programme. It presents production deployments, validated ROI figures, compliance status, and a fully costed Wave 2 proposal. Boards that receive this report understand the J-curve and commit to Phase 4 funding. Boards that don't receive it at Month 9 will redirect funding at Month 12.

Key Phase 3 Deliverables

3–5 Production Deployments — live, governed, audited, delivering measurable output (not demo or PoC environments)

Validated ROI Report — productivity gain vs. baseline, quantified in time, cost, or revenue terms. Auditable. No forecast projections — actuals only

Board Value Report — max 5 pages: production count, ROI actuals, J-curve position, compliance status, Wave 2 investment proposal

Wave 2 Pipeline — next 5–10 use cases prioritised, resourced, and approved for Phase 4 deployment

Gate to Phase 4: 2× productivity gate validated on ≥2 Wave 1 use cases · Board Value Report presented and approved · Wave 2 budget committed · Speed-to-production <60 days demonstrated
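The Phase 4 gate lends itself to a mechanical check. A minimal sketch, assuming productivity is measured as baseline hours per unit of work divided by AI-assisted hours per unit (the playbook also allows cost or revenue measures), and reading "speed-to-production <60 days demonstrated" as at least one Wave 1 use case reaching production in under 60 days. The class, field and function names and the example figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Wave1UseCase:
    name: str
    baseline_hours_per_unit: float  # effort per unit of work before AI
    ai_hours_per_unit: float        # effort per unit with AI, measured within 90 days
    days_to_production: int

    def productivity_multiple(self) -> float:
        return self.baseline_hours_per_unit / self.ai_hours_per_unit

    def passes_2x_gate(self) -> bool:
        return self.productivity_multiple() >= 2.0


def kill_list(use_cases: list[Wave1UseCase]) -> list[str]:
    """Use cases below 2x are killed, not extended."""
    return [uc.name for uc in use_cases if not uc.passes_2x_gate()]


def phase4_gate(use_cases: list[Wave1UseCase], board_approved: bool,
                wave2_budget_committed: bool) -> dict[str, bool]:
    """Evaluate each Gate to Phase 4 criterion separately."""
    return {
        "2x gate on >=2 Wave 1 use cases": sum(uc.passes_2x_gate() for uc in use_cases) >= 2,
        "speed-to-production <60 days": any(uc.days_to_production < 60 for uc in use_cases),
        "Board Value Report approved": board_approved,
        "Wave 2 budget committed": wave2_budget_committed,
    }


wave1 = [
    Wave1UseCase("Contract review", 6.0, 2.0, 45),   # 3.0x
    Wave1UseCase("Invoice matching", 2.0, 0.8, 70),  # 2.5x
    Wave1UseCase("HR policy Q&A", 0.5, 0.4, 55),     # 1.25x
]
gate = phase4_gate(wave1, board_approved=True, wave2_budget_committed=True)
print(all(gate.values()), kill_list(wave1))  # True ['HR policy Q&A']
```

Returning each criterion separately, rather than a single boolean, shows the sponsor exactly which condition is blocking the gate.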

PHASE 4 — SCALE AND EMBED · Months 11–24

Objective: Transition AI From Programme to Operational Capability

Phase 4 is the operational inflection point. The AI Centre of Excellence transitions from a centralised programme owner to a hub-and-spoke model — maintaining governance standards and shared infrastructure while delegating delivery ownership to business function champions. AI capability is being embedded into the operating model, not delivered to it.

The Champion Network is the most important Phase 4 asset. Champions are identified, trained to Tier 3 certification, and deployed as the human infrastructure of the transformation. 93% of enterprise AI adoption resistance comes from employees who cannot see a pathway from their current role to an AI-augmented version of it. Champions show them the pathway through demonstrated competence, not corporate messaging.

Key Phase 4 Deliverables

10+ Use Cases in Production — across a minimum of 3 distinct business functions, each with validated ROI

Champion Network — minimum 1 Champion per major business function, each Tier 3 certified and active in monthly Champion Forum

AI Skills Certification Programme — Tier 1 (Literacy) complete organisation-wide; Tier 2 (Proficiency) underway; Tier 3 (Mastery) in all major functions

Wave 3 Pipeline — next 10–20 use cases prioritised and approved

Gate to Phase 5: AI Adoption Rate ≥40% eligible workforce · Speed-to-production <30 days · AI Proficiency Index — Tier 2 majority · 10+ production deployments live · CoE operating as hub-and-spoke

PHASE 5 — TRANSFORM AND OPTIMISE · Months 25–36

Objective: The 4× Speed With Quality Benchmark and Firm Sovereignty

Phase 5 is not a destination — it is a permanent operating standard. The target is a 4× speed with quality benchmark across all production AI deployments: the same output produced at four times the speed, with auditable quality at every stage. This benchmark is validated at Day 30, Day 90, and Day 180 of each new production deployment. It is not aspirational; it is contractual.
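The checkpoint validation can be sketched as below, assuming "speed" is the ratio of baseline time to AI-assisted time for the same output and "quality" is recorded as a pass/fail audit result at each checkpoint. The function name, data layout and figures are hypothetical, and treating a missing checkpoint as a failure is an assumption.

```python
CHECKPOINT_DAYS = (30, 90, 180)  # validation points named in the playbook
TARGET_MULTIPLE = 4.0


def validate_4x(baseline_hours: float,
                ai_hours_at: dict[int, float],
                quality_passed_at: dict[int, bool]) -> dict[int, bool]:
    """Return pass/fail per checkpoint: >=4x faster AND quality audit passed.

    A checkpoint with no measurement or no audit result counts as a failure.
    """
    results = {}
    for day in CHECKPOINT_DAYS:
        hours = ai_hours_at.get(day)
        fast_enough = hours is not None and baseline_hours / hours >= TARGET_MULTIPLE
        results[day] = fast_enough and quality_passed_at.get(day, False)
    return results


# Hypothetical deployment: 8 hours per unit before AI.
checks = validate_4x(
    baseline_hours=8.0,
    ai_hours_at={30: 2.5, 90: 2.0, 180: 1.6},
    quality_passed_at={30: True, 90: True, 180: True},
)
print(checks)                # {30: False, 90: True, 180: True}
print(all(checks.values()))  # False (Day 30 checkpoint fails)
```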

Firm Sovereignty is the Phase 5 strategic outcome that cannot be purchased or replicated. In Satya Nadella's words (Davos 2026), it is 'a company's ability to embed its tacit knowledge in models it controls.' The AI assets built in Phases 1–4 (the prompt libraries, the workflow systems, the training data, the domain-specific models) become a competitive moat that compounds over time. Competitors can buy the same technology stack. They cannot buy your organisation's embedded institutional knowledge.

Key Phase 5 Deliverables

4× Benchmark Achieved — validated across all major production deployments with auditable quality measurement

Firm Sovereignty Assets — proprietary prompt libraries, fine-tuned domain models, internal knowledge bases, automated workflow infrastructure

Enterprise AI Annual Report — board-level accountability document: production count, ROI actuals, compliance status, J-curve position, Year 4–6 roadmap

Year 4–6 Roadmap — next 36-month transformation plan, approved by board, funded

Phase 5 is the operating standard, not an exit state. Annual review: AI Adoption Rate ≥80% eligible workforce · 3× investment return achieved · Speed-to-production <21 days · Firm Sovereignty assets inventoried and governed

The J-Curve Investment Model: Setting Board Expectations

Why Boards Pull Funding at Month 12 — and How to Prevent It

The J-curve is the defining financial pattern of every infrastructure investment, and enterprise AI transformation is infrastructure investment. The J-curve must be presented to the board before the programme begins, not explained at Month 12 when the investment trough needs justifying. Boards that discover the J-curve after the fact redirect funding. Boards that understand it before programme inception remain committed through the trough.

INVESTMENT TROUGH · Months 1–12

Cumulative investment rises steeply. Value realised rises slowly from Phase 3 Wave 1 deployments. Investment exceeds value. This is the correct and expected pattern: the organisation is building infrastructure. Every dollar here reduces the cost of the next ten deployments.

VALUE INFLECTION · Months 12–18

The cumulative value curve intersects and begins to exceed the cumulative investment curve. Wave 1 ROI begins compounding. The programme has crossed from net investment to net return. The J-curve bottom has passed.

PRODUCTIVITY ACCELERATION · Months 18–36

Compounding ROI from multiple production deployments. Marginal cost of new use cases declining as shared foundations mature. Year 3 ROI target: 3× total programme investment returned. The organisation is building a Firm Sovereignty AI moat.
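The value inflection point is the first month in which cumulative value catches up with cumulative investment. A minimal sketch with hypothetical monthly figures in arbitrary currency units (front-loaded spend, value starting with Wave 1 deployments around Month 9); the series and function names are illustrative, not benchmarks.

```python
from itertools import accumulate
from typing import Optional


def breakeven_month(investment: list[float], value: list[float]) -> Optional[int]:
    """First month (1-based) where cumulative value >= cumulative investment."""
    for month, (spent, realised) in enumerate(
            zip(accumulate(investment), accumulate(value)), start=1):
        if realised >= spent:
            return month
    return None  # never crosses within the horizon


def return_multiple(investment: list[float], value: list[float]) -> float:
    """Total value realised divided by total programme investment."""
    return sum(value) / sum(investment)


# Hypothetical 36-month programme.
investment = [100] * 12 + [40] * 24      # heavy spend through Phases 1-3
value = [0] * 8 + [50] * 4 + [300] * 24  # Wave 1 value from Month 9, larger later

print(breakeven_month(investment, value))            # 16
print(round(return_multiple(investment, value), 2))  # 3.43
```

With these made-up figures the crossover lands at Month 16, inside the Months 12–18 inflection window, and the 36-month multiple clears the 3× target; real programmes should plug in their own monthly actuals.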

Investment benchmarks: Phase 1–2 investment is typically 10–15% of the total programme budget, spent on strategy and governance before any AI deployment. Total programme budget benchmark: 3–4% of annual revenue for technology-forward industries. Data quality remediation: budget $12.9M annually per large organisation as a baseline governance cost; this is a real cost that vendor ROI slides consistently omit. (Sources: Retail AI Benchmark 2026, Informatica CDO Insights)
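Turning the two percentage benchmarks into planning ranges is simple arithmetic. A minimal sketch, assuming a hypothetical organisation with $2B annual revenue and pairing the low ends and the high ends of each range; the function name and revenue figure are illustrative only.

```python
def budget_ranges(annual_revenue: float) -> dict[str, tuple[float, float]]:
    """Planning ranges from the playbook's percentage benchmarks.

    Total programme budget: 3-4% of annual revenue.
    Phase 1-2 (strategy and governance): 10-15% of the total programme budget.
    """
    total_low = annual_revenue * 3 / 100
    total_high = annual_revenue * 4 / 100
    return {
        "total_programme": (total_low, total_high),
        "phase_1_2": (total_low * 10 / 100, total_high * 15 / 100),
    }


ranges = budget_ranges(2_000_000_000)  # hypothetical $2B revenue
for item, (low, high) in ranges.items():
    print(f"{item}: ${low / 1e6:.0f}M to ${high / 1e6:.0f}M")
# total_programme: $60M to $80M
# phase_1_2: $6M to $12M
```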

The BCG 10-20-70 Principle: Why 70% of Transformation Happens in People

The Shadow AI Signal — 93% of Enterprise ChatGPT Use Is Ungoverned

The BCG 10-20-70 principle defines where AI transformation actually succeeds or fails: 10% of the outcome is determined by algorithms, 20% by data and technology, and 70% by people, processes and cultural transformation. Most enterprise AI investment targets the 30% that determines 30% of success. The 70% (change management, skills development, incentive alignment and cultural shift) remains consistently underfunded and under-planned.
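One way to apply the principle is to compare a programme's budget split against 10-20-70. This sketch treats the principle as a budget-allocation guideline, which is an interpretation (the principle describes where success is determined); the category names and the example budget are hypothetical.

```python
# BCG 10-20-70 shares, in percent.
TARGET_PCT = {"algorithms": 10, "data_and_technology": 20, "people_process_culture": 70}


def allocation_gaps(budget: dict[str, float]) -> dict[str, float]:
    """Percentage-point gap between actual budget share and the 10-20-70 target.

    Positive = over-invested relative to the principle; negative = under-invested.
    """
    total = sum(budget[k] for k in TARGET_PCT)
    return {k: round(100 * budget[k] / total - TARGET_PCT[k], 1) for k in TARGET_PCT}


# Hypothetical programme budget in $M, skewed toward technology.
budget = {"algorithms": 4.0, "data_and_technology": 4.0, "people_process_culture": 2.0}
print(allocation_gaps(budget))
# {'algorithms': 30.0, 'data_and_technology': 20.0, 'people_process_culture': -50.0}
```

A large negative gap on people, process and culture is the quantitative form of the underfunding described above.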

The Shadow AI signal tells the story clearly. 93% of enterprise ChatGPT use runs through personal, non-corporate accounts. This is not a policy failure — it is a change management failure. Employees have already decided that AI makes them more effective. The official programme is moving too slowly, or its tools are too restrictive, or both. The fix is not to ban personal AI use. The fix is to create a governed, capable, fast-moving programme that is more attractive than the ungoverned alternative.

The 3 Change Management Principles That Determine Phase 4 Success:

1. Make AI adoption personally beneficial and career-critical — not just organisationally mandated. The Champion Network is the delivery mechanism.

2. Lead with demonstrated capability, not training compliance. Champions show their peers what AI-augmented work looks like. That is more persuasive than any mandatory training module.

3. Design the 3-tier AI Skills Certification as a career pathway, not a compliance programme. Tier 3 Mastery should be a professional credential that is recognised, rewarded, and visible.

The AI Centre of Excellence: Your Enterprise AI Operating System

CoE Architecture: From Centralised to Federated in 36 Months

The AI Centre of Excellence is not a team; it is an organisational capability. It is the infrastructure that makes every subsequent use case cheaper, faster and safer to deploy than the one before it. A well-designed CoE cuts speed-to-production (the time a use case takes to reach production) from an average of 6 months without shared infrastructure to under 21 days with it.

The CoE evolves through three operating models as the programme matures:

Phase 1–2: Centralised. The CoE owns all AI programme activity, maintains all governance, and controls all access.

Phase 3–4: Hub and Spoke. The CoE maintains standards and shared infrastructure; business functions own delivery within those standards.

Phase 5: Federated. The CoE sets the governance framework; business functions operate with full autonomy within it.

The CoE has authority to: approve new AI tool adoption · classify use cases against the EU AI Act risk framework · publish and enforce AI governance standards · manage the enterprise AI vendor roster · operate the skills certification programme.

Key CoE Performance Metric 1: Speed-to-Production — target <60 days at Phase 3, <21 days at Phase 5

Key CoE Performance Metric 2: AI Adoption Rate — target ≥40% of eligible workforce at the Gate to Phase 5, ≥80% at the Phase 5 annual review

Key CoE Performance Metric 3: Production Count — 3–5 at Phase 3 gate, 20+ at Phase 5 standard
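The three CoE metrics can be tracked as a simple scorecard. A minimal sketch against the Phase 5 standard stated in this playbook (speed-to-production under 21 days, adoption of at least 80% of the eligible workforce, 20+ production deployments). The class and function names and the sample figures are hypothetical, and how each metric is measured (for example, median days per use case) is an assumption left to the CoE.

```python
from dataclasses import dataclass


@dataclass
class CoEMetrics:
    speed_to_production_days: float  # e.g. median days from approval to production (assumption)
    adoption_rate: float             # share of eligible workforce using AI, 0.0 to 1.0
    production_count: int            # AI use cases live in production


def phase5_scorecard(m: CoEMetrics) -> dict[str, bool]:
    """Pass/fail against the Phase 5 standard for each CoE metric."""
    return {
        "speed_to_production < 21 days": m.speed_to_production_days < 21,
        "adoption_rate >= 80%": m.adoption_rate >= 0.80,
        "production_count >= 20": m.production_count >= 20,
    }


# Hypothetical review period: fast delivery and broad portfolio, adoption still short.
print(phase5_scorecard(CoEMetrics(18, 0.72, 23)))
# {'speed_to_production < 21 days': True, 'adoption_rate >= 80%': False, 'production_count >= 20': True}
```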

The Proof of Concept: Expert AI Prompts as a Live Enterprise Framework

How the Expert AI Prompts Methodology Maps to Enterprise Deployment

The principles in this playbook are not theoretical. Every framework described here — phased deployment, governance before scale, model-agnostic architecture, workforce transformation, compounding automation — is operational in a live system running today. Expert AI Prompts is the working demonstration: 30 digital products across 30 industries, 1,500+ domain-specific prompts, 15 integrated AI workflow systems, and fully automated operations built without developer teams, VC capital, or headcount scaling.

The methodology is not waiting to be proven at enterprise scale. It is proven. The enterprise deployment architecture simply adds the governance layers, CoE infrastructure, and regulated access controls that large-scale, multi-function, regulated organisations require. The core principle remains the same: build AI systems that encode your organisation's unique intelligence, and they become competitive assets that compound over time rather than costs that depreciate.

| Dimension | Expert AI Prompts (Validated) | Enterprise Equivalent |
|---|---|---|
| Scale | 30 industries, 30 product contexts | Enterprise CoE scope across business functions |
| Prompts | 1,500+ domain-specific prompts | Enterprise prompt library: governed, versioned, reusable |
| Workflows | 15 integrated AI workflow systems | Wave 1–3 use case portfolio in production |
| Operations | Near-zero daily intervention within 60 days | Phase 5 autonomous operations benchmark |
| Speed | 4× speed with quality, validated in production | Phase 5 production target |
| Evidence | Inbound $300K+ AI Strategy Leader role enquiry | Live market validation of enterprise-grade methodology |

"Proof beats theory. A live, revenue-generating AI system is the most credible signal an AI Strategy Leader can bring to an executive interview or board briefing." — Matthew Bulat, Expert AI Prompts

Start Your Transformation: Free Enterprise AI Resources


Enterprise AI Readiness Checklist: 25 validation questions to answer before you deploy anything. Instant PDF download, no email required.

AI Governance Framework Template: board-ready, compliance-mapped, editable DOCX covering EU AI Act, AU Privacy Act and SOC 2 alignment.

30-Minute AI Strategy Session: a direct session with Matthew Bulat, with a specific next step for your programme within 48 hours.

Explore the Transformation Cluster: Related Deep-Dives

Enterprise AI Transformation Roadmap 2026: The 90-Day Onboarding Framework →

Matthew Bulat — CAIO · AI Strategy Leader · Enterprise Technology Executive

Matthew Bulat is the Founder of Expert AI Prompts and a technology and AI strategy executive with 20+ years of experience. His career spans roles as CTO; as national-scale Technical Operations Manager for Federal Government infrastructure across 20 cities, 3 time zones and 4,000 users; and 8+ years as a University Lecturer in IT and engineering at CQUniversity.

Expert AI Prompts — the live operating demonstration of this playbook — runs 30 industries, 1,500+ domain-specific prompts, and 15 AI workflow systems with near-zero daily intervention. The frameworks in this playbook are derived from and validated by that working system.

→ Former CTO · Technical Operations Manager, Federal Government

→ University Lecturer, IT & Engineering — CQUniversity (8+ years)

→ Founder, Expert AI Prompts — 30 industries · 1,500+ prompts · 15 AI workflows

→ Inbound interest: AI Strategy Leader role, $300K+ USD base


expertaiprompts.com · Townsville, Queensland, Australia

Matthew Bulat · CAIO / AI Strategy Leader · Expert AI Prompts

expertaiprompts.com · 0407 320 726 · Townsville, QLD, Australia

© 2026 Expert AI Prompts. All rights reserved.