AI BUY VS BUILD FRAMEWORK

AI Buy vs Build Decision Framework:

Four Paths, Seven Factors, One Decision

By Matthew Bulat · April 2026 · 15-min read · Pillar 3


67% vs 22% -- Purchased AI success rate vs internal builds (MIT NANDA 2025)

42% -- S&P Global 2025: enterprise companies that scrapped the majority of AI initiatives

4 Paths -- Buy / Build / Partner / Hybrid

7 Factors -- weighted decision matrix

The buy vs build decision is the single most consequential strategic choice an enterprise makes before any AI initiative begins. Most organisations make it badly -- not because they lack intelligence, but because they treat a four-path decision as a binary one, compare Year 1 costs instead of three-year total cost of ownership, and discover vendor lock-in after the contract is signed. The consequences compound: according to S&P Global, 42% of companies scrapped the majority of their AI initiatives in 2025, up sharply from 17% the year prior.

Section 2 - Why Most AI Investment Decisions Are Made Badly

The buy vs build decision fails consistently in the same three ways: a binary decision (build or buy) is applied to what is structurally a four-path choice; a Year 1 cost comparison is applied to what is structurally a three-year TCO problem; and a technology decision is applied to what is structurally a strategy decision with technology consequences.

MIT NANDA 2025 research finds that purchased AI solutions succeed approximately 67% of the time versus only 22% for internal builds. These are not technology failure rates. They are strategy failure rates -- the decision that precedes the technology determines the outcome far more than the technology itself. The 22% build success rate is not a failure of engineering. It is a failure of decision quality.

The Three Most Common Decision Failures

YEAR 1 COST ANCHORING: Build looks cheap because engineers exist on payroll and their time appears free. Buy looks expensive because the licence cost is the only number on the page. The decision that looks obvious in Year 1 often looks different in Year 3, when engineering maintenance, vendor price changes, and integration complexity are all fully visible.

BUILD-TO-LEARN CONFUSION: A prototype built for learning becomes a production system by default -- no explicit decision is ever made to productionise it. It just keeps being used, without SLAs, governance, or a named owner. The prototype accumulates users and business dependency until migration is prohibitively expensive. Label every build initiative explicitly: Build-to-Learn or Build-to-Run. They require different governance, documentation, and success criteria.

LOCK-IN DISCOVERED POST-CONTRACT: Vendor lock-in risk is not assessed until migration is needed. By then, switching cost is high, leverage is zero, and the vendor knows it. Lock-in risk is a scored criterion in the seven-factor framework -- not an afterthought assessed after go-live when the leverage is already gone.

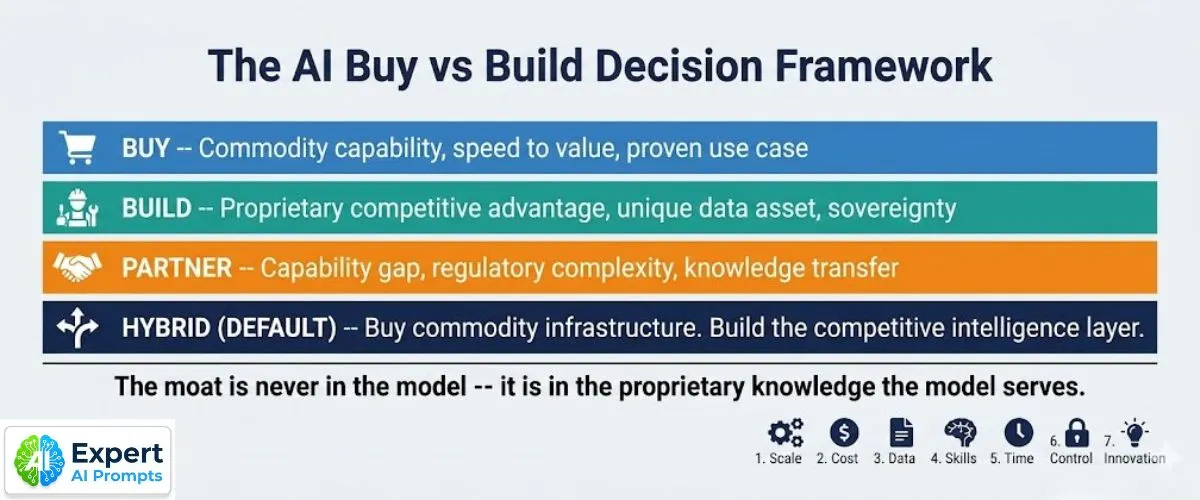

Section 3 - The Four Paths: Buy, Build, Partner, Hybrid

The buy vs build decision is not binary. It is a four-path decision -- and the correct path is almost never the same for every use case in the same organisation. The framework evaluates all four paths for every initiative, using the seven-factor matrix to identify which path scores highest for the specific use case in the specific organisational context.

Path 1: Buy (Vendor SaaS or API)

When to choose: Commodity capability that is not a competitive differentiator. Proven use case with documented implementations. Speed to production is critical (under 60 days). Internal AI engineering capacity is unavailable or committed elsewhere.

Risk profile: Vendor dependency. Data handling governed by the vendor's terms rather than your own. Pricing changes at scale. Exit cost and accumulating lock-in. Governance evidence that survives contract termination must be contractually required.

Examples: Foundation model APIs (OpenAI, Anthropic, Google). Meeting summarisation. Email drafting assistants. Standard customer support chatbots. Document OCR. Spam filters. CRM AI features.

Path 2: Build (Custom Internal)

When to choose: Proprietary competitive advantage that encodes unique organisational intelligence. Unique data asset that cannot leave the organisation. Security or sovereignty constraint with no compliant vendor. Long-term TCO favours build at scale.

Risk profile: High engineering cost. Long time-to-value (typically 12-24 months to production). Talent dependency. Maintenance overhead. Risk of technical debt if the specification changes after the build.

Examples: Firm Sovereignty AI systems. Proprietary knowledge graphs. Custom models trained on unique operational data. AI embedded in patented product features. RAG on proprietary document estates.

Path 3: Partner (System Integrator or Co-Development)

When to choose: An in-house capability gap that cannot be filled within the required timeline. Regulatory complexity requiring specialist expertise. Technology strategy still forming, so committing to a specific architecture is premature.

Risk profile: IP ownership ambiguity, which must be contractually specified upfront. Knowledge transfer risk: the capability should move in-house by Month 6-12. Cost escalation and quality assurance overhead.

Examples: Industry-specific AI for legal, medical, or financial compliance. EU AI Act technical documentation. Custom MLOps platform build. Data governance infrastructure. Change management programme delivery.

Path 4: Hybrid (Buy Infrastructure + Build Intelligence) -- The Default

When to choose: Most enterprise AI deployments are correctly Hybrid: purchase commodity AI infrastructure from the best available vendor, then build the proprietary intelligence layer on top. The principle: buy what every competitor can buy; build what only your organisation can build.

Buy (commodity infrastructure): LLM API access (OpenAI, Anthropic, Google APIs). Vector database and orchestration platform. MLOps tooling. Standard cloud AI infrastructure.

Build (proprietary intelligence layer): Governed proprietary prompt library (domain-specific, version-controlled, CoE-owned). Fine-tuned models trained on unique organisational data. RAG on proprietary document estate. Custom evaluation framework. Governance audit trail.

Core Principle: Buy commodity infrastructure. Build the competitive intelligence layer. The moat is never in the model -- it is in the proprietary knowledge the model serves. A competitor can buy the same foundation model API on the same terms tomorrow. They cannot buy your organisation's 20 years of domain knowledge encoded in a governed prompt library.

Section 4 - The Seven-Factor Decision Matrix

The seven-factor matrix replaces the binary build-or-buy question with a structured evaluation that scores all four paths against the specific strategic context of each use case. Apply it as a weighted scoring exercise: weight each factor (1 to 3) based on your organisational context, score each path (1 to 5) against each factor, multiply and sum. The highest weighted total indicates the recommended path.

Factor 1: Competitive Differentiation

Decision question: Does this AI capability create durable competitive advantage? Could a competitor replicate it with the same vendor tools within 12 months?

Path guidance: HIGH DIFFERENTIATION: Build. LOW (commodity): Buy. Unique data asset + differentiation: Build or Hybrid.

Factor 2: Data Sovereignty

Decision question: Can this use case's data leave the organisation? Are there regulatory, security, or competitive reasons it must remain in-house?

Path guidance: DATA SOVEREIGNTY REQUIRED: Build or on-premises Partner. NO CONSTRAINT: Buy or Hybrid with contractual data protections.

Factor 3: Time to Value

Decision question: What is the production timeline requirement? Is this a critical business need now, or a strategic programme over 12-24 months?

Path guidance: 60-90 DAY NEED: Buy strongly preferred. 12-24 MONTH HORIZON: Build eligible. Speed and quality both required: Partner.

Factor 4: Internal AI Capability

Decision question: Is AI engineering capacity available without cannibalising core product development? Does the capability already exist, or must it be built?

Path guidance: STRONG CAPACITY AVAILABLE: Build. LIMITED CAPACITY: Buy or Partner. BUILDING CAPABILITY: Partner with explicit knowledge transfer milestones.

Factor 5: 3-Year Total Cost of Ownership

Decision question: What is the full 3-year cost across all components: licensing, engineering, infrastructure, governance, maintenance, talent, and exit cost?

Path guidance: Run the full TCO model (see Section 6). Build cases typically underestimate ongoing maintenance; Buy cases typically underestimate scale-up costs. Never compare Year 1 only.

Factor 6: Vendor Lock-In Risk

Decision question: What is the exit cost if the vendor fails, is acquired, changes pricing, or discontinues the product? Can data and models be exported cleanly?

Path guidance: HIGH LOCK-IN: Build or open-standard Buy. MODERATE: Buy with contractual portability clauses. LOW: Buy with standard contract protections.

Factor 7: Regulatory & Compliance Fit

Decision question: Does the vendor satisfy SOC 2 Type II, EU AI Act technical documentation, data residency, and AU Privacy Act APP 1.7 obligations?

Path guidance: COMPLIANCE GAP: Build or certified Partner. COMPLIANT VENDOR: Buy with obligations contractually defined and auditable. PARTIAL FIT: Hybrid.

How to Apply the Weighted Scoring Process

Step 1: Weight each factor 1 to 3 based on your organisation's strategic context. A regulated enterprise might weight Data Sovereignty and Compliance at 3; a consumer tech company might weight Time to Value at 3. Document the weighting rationale before scoring.

Step 2: Score each of the four paths (Build, Buy, Partner, Hybrid) against each factor on a scale of 1 (poor fit) to 5 (strong fit). Score honestly, not aspirationally.

Step 3: Multiply each score by its factor weight. Sum the weighted scores for each path.

Step 4: The highest total weighted score indicates the recommended path. Review the result for coherence against the qualitative judgement of those closest to the use case.

Step 5 (critical): Before accepting the result, apply the critical failure override: if any factor scores 1 for a given path, that path is eliminated regardless of its total score. A score of 1 on Data Sovereignty, for example, means the path would violate a non-negotiable constraint. The framework guides the decision; it does not override common sense.
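The five steps can be sketched in a few lines of Python. This is a minimal illustration, not the Scorecard itself: the function names `score_paths` and `recommend` are hypothetical, and the weights and scores are inputs you supply from your own assessment. The one design choice worth noting is that the Step 5 override runs before any totals are compared, so an eliminated path can never be recommended on a high total.

```python
# Hypothetical helpers; weights and scores are inputs you supply.
from typing import Dict, Optional

FACTORS = [
    "Competitive Differentiation",
    "Data Sovereignty",
    "Time to Value",
    "Internal AI Capability",
    "3-Year TCO",
    "Vendor Lock-In Risk",
    "Regulatory & Compliance Fit",
]
PATHS = ["Buy", "Build", "Partner", "Hybrid"]


def score_paths(
    weights: Dict[str, int], scores: Dict[str, Dict[str, int]]
) -> Dict[str, Optional[int]]:
    """Weighted total per path; None marks a path eliminated by the override."""
    # Step 1: every factor carries a weight from 1 to 3.
    for factor in FACTORS:
        if not 1 <= weights[factor] <= 3:
            raise ValueError(f"weight for {factor!r} must be 1-3")
    totals: Dict[str, Optional[int]] = {}
    for path in PATHS:
        # Step 2: every path is scored 1 (poor fit) to 5 (strong fit) per factor.
        row = scores[path]
        for factor in FACTORS:
            if not 1 <= row[factor] <= 5:
                raise ValueError(f"{path} score for {factor!r} must be 1-5")
        # Step 5: any factor scored 1 eliminates the path, whatever its total.
        if any(row[factor] == 1 for factor in FACTORS):
            totals[path] = None
            continue
        # Step 3: multiply each score by its factor weight and sum.
        totals[path] = sum(weights[f] * row[f] for f in FACTORS)
    return totals


def recommend(totals: Dict[str, Optional[int]]) -> Optional[str]:
    """Step 4: highest surviving total; None if every path was eliminated."""
    surviving = {path: total for path, total in totals.items() if total is not None}
    if not surviving:
        return None
    return max(surviving, key=lambda path: surviving[path])
```

On a tie, `max` returns whichever tied path comes first in `PATHS`; a tie is better treated as a signal for the qualitative review in Step 4 than as an answer.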


Download the Buy vs Build Decision Scorecard for the full weighted scoring template with all four paths across all seven factors.

Section 5 - The 3C Quick-Triage Model

For decisions where a full seven-factor assessment is not required, the 3C Quick-Triage produces a directional recommendation in under five minutes.

CAPABILITY: Does the organisation have the internal talent to build and maintain this without compromising other priorities? YES = Build eligible. NO = Buy or Partner.

COMPLEXITY: Is this a standard use case (commodity AI will solve it) or a proprietary use case (only a custom solution produces the required behaviour)? STANDARD = Buy. PROPRIETARY = Build or Partner.

CRITICALITY: How central is this to competitive advantage? CORE COMPETITIVE MOAT = Build. OPERATIONAL NECESSITY = Buy. STRATEGIC CAPABILITY BUILDING = Partner.

Research signal: 'Rate every AI initiative on three axes: Capability, Complexity, and Criticality. The intersection determines the optimal path.' (TechAhead, 2026)

Note: The 3C model produces a directional answer. For initiatives over $500K total investment or with high lock-in risk, run the full seven-factor scoring process.
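One way to mechanise the triage is to treat each axis as narrowing a set of eligible paths and take the intersection, as the TechAhead quote suggests. This is a sketch under assumptions: the function name `triage` and the string labels are hypothetical, and escalating to the full scoring when the axes conflict (an empty or multi-path intersection) is an added rule, not part of the 3C model as stated. The $500K and high-lock-in escalation follows the note above.

```python
from typing import FrozenSet, Tuple

ALL_PATHS: FrozenSet[str] = frozenset({"Buy", "Build", "Partner"})

# Each axis narrows the set of eligible paths, per the 3C rules.
CAPABILITY = {
    True: ALL_PATHS,                       # YES: Build eligible (others not excluded)
    False: frozenset({"Buy", "Partner"}),  # NO: Buy or Partner
}
COMPLEXITY = {
    "standard": frozenset({"Buy"}),
    "proprietary": frozenset({"Build", "Partner"}),
}
CRITICALITY = {
    "core_moat": frozenset({"Build"}),
    "operational": frozenset({"Buy"}),
    "strategic": frozenset({"Partner"}),
}


def triage(
    has_capability: bool,
    complexity: str,
    criticality: str,
    total_investment: float,
    high_lock_in: bool,
) -> Tuple[FrozenSet[str], bool]:
    """Return (candidate paths, whether the full seven-factor scoring is required)."""
    candidates = (
        CAPABILITY[has_capability] & COMPLEXITY[complexity] & CRITICALITY[criticality]
    )
    needs_full_scoring = (
        total_investment > 500_000  # over $500K total investment
        or high_lock_in
        or len(candidates) != 1     # assumption: conflicting axes also escalate
    )
    return candidates, needs_full_scoring
```

For example, a team without spare capacity facing a standard, operational use case gets `({"Buy"}, False)`, while a proprietary core-moat use case with no internal capability produces an empty set and is escalated.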

Section 6 - The 3-Year Total Cost of Ownership Model

The most common financial error in AI investment decisions is comparing Year 1 costs across paths. Year 1 Buy costs are front-loaded with licensing fees. Year 1 Build costs are understated because engineering time appears free. By Year 3, Build maintenance and Buy scale-up pricing are both fully visible and the cost ranking has often reversed, but the decision has already been made on Year 1 data.

Rule: The TCO comparison must run across all three years, include all cost categories, and model the scale-up cost explicitly for Buy options, since vendor pricing at enterprise scale is rarely the same as at pilot scale.

The Cost Categories Most Organisations Miss

Missing Cost 1

Engineering maintenance and drift remediation: AI models degrade over time as data distributions shift. The cost of maintaining production accuracy -- retraining, prompt recalibration, edge case handling -- is absent from most Build business cases. Budget 15-25% of initial build cost per year for ongoing maintenance.

Missing Cost 2

Governance infrastructure overhead: Every production AI system requires audit trails, monitoring tooling, drift detection, and compliance evidence. This is a recurring operational cost, not a one-time investment. EU AI Act obligations for high-risk systems, including post-market monitoring and logging, are ongoing, and a substantial modification to the system triggers a new conformity assessment.

Missing Cost 3

Scale-up pricing for Buy options: Most vendor pricing is negotiated at pilot or initial deployment volume. Enterprise scale pricing for the same platform is often 200-400% of the initial rate. Model the scale-up pricing explicitly, with a vendor pricing change scenario at 150% of current rate.

Missing Cost 4

Exit cost: The cost of migrating from a Build or Buy path if it fails at Year 2 is almost never included in the initial TCO. It should be. For Build paths, this is the write-off cost of the engineering investment. For Buy paths, it is the data migration, retraining, and business disruption cost.

Missing Cost 5

Data quality remediation: Gartner 2026 benchmark -- $12.9M annually per large enterprise for data governance and quality maintenance for AI workloads. This is a real operational cost that is absent from most AI business cases.
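A minimal 3-year TCO sketch that covers the maintenance, governance, scale-up, price-change, and exit costs listed above. The structure is an assumption made for illustration: the class and function names are hypothetical, Build maintenance is assumed to start in Year 2, Buy is assumed to run at pilot pricing in Year 1 and at enterprise-scale pricing in Years 2-3, and exit cost is budgeted as a contingency line. Data quality remediation can be folded into the governance line. All figures are inputs you supply, not benchmarks.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BuildCosts:
    initial_build: float        # engineering cost to reach production (Year 1)
    maintenance_rate: float     # e.g. 0.15-0.25 of initial build per year
    governance_per_year: float  # audit trails, monitoring, drift detection
    exit_cost: float            # write-off if the path fails


@dataclass
class BuyCosts:
    pilot_licence_per_year: float  # as negotiated at pilot volume
    scale_multiplier: float        # e.g. 2.0-4.0 at enterprise scale
    governance_per_year: float
    exit_cost: float               # data migration, retraining, disruption


def build_years(c: BuildCosts) -> List[float]:
    """Year 1 carries the build; Years 2-3 carry maintenance (assumption)."""
    years = [c.initial_build + c.governance_per_year]
    for _ in range(2):
        years.append(c.initial_build * c.maintenance_rate + c.governance_per_year)
    return years


def buy_years(c: BuyCosts, price_change: float = 1.0) -> List[float]:
    """Year 1 at pilot pricing; Years 2-3 at scale pricing, optionally stressed.

    price_change=1.5 models a vendor price change to 150% of the current rate.
    """
    years = [c.pilot_licence_per_year + c.governance_per_year]
    scaled = c.pilot_licence_per_year * c.scale_multiplier * price_change
    for _ in range(2):
        years.append(scaled + c.governance_per_year)
    return years


def three_year_tco(years: List[float], exit_cost: float) -> float:
    """Sum all three years and include exit cost, never Year 1 alone."""
    return sum(years) + exit_cost
```

Comparing `buy_years(costs)` with `buy_years(costs, price_change=1.5)` side by side makes the vendor pricing scenario explicit rather than implicit.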

Section 7 - The Five Vendor Lock-In Layers

Vendor lock-in at the AI layer does not operate at a single point in the stack. It operates simultaneously at five layers, and each layer accumulates independently. An organisation that has managed lock-in at the model layer may still be fully locked in at the data and governance evidence layers.

Layer 1

MODEL LOCK-IN: AI outputs tightly coupled to a single model's specific behaviour. When the model is updated, deprecated, or repriced, outputs change and cost structures change with no alternative. Mitigation: model-agnostic abstraction layer so the proprietary intelligence layer can be served by multiple model providers.

Layer 2

ORCHESTRATION LOCK-IN: Workflow automation built on a single vendor's proprietary orchestration framework, with workflow formats that cannot be migrated. Mitigation: open-standard orchestration frameworks (MCP-standard or equivalent) where workflow logic is separable from the execution platform.

Layer 3

DATA LOCK-IN: Training data, fine-tuning datasets, and RAG indices stored in vendor-controlled infrastructure with no export mechanism. Mitigation: contractual data portability clause (all data exportable in portable format within 48 hours of contract termination) and regular local backups.

Layer 4

GOVERNANCE EVIDENCE LOCK-IN: Audit trails, model documentation, and compliance evidence stored in vendor systems that may not be accessible after contract termination. Mitigation: EU AI Act Annex IV documentation maintained in organisation-controlled systems; Regulatory Access clause in every vendor contract.

Layer 5

ORGANISATIONAL KNOWLEDGE LOCK-IN: Configuration knowledge, optimisation techniques, and undocumented behaviour patterns held by a small number of individuals, often on the vendor side, and lost when those people or the vendor relationship leave. Mitigation: systematic documentation of all proprietary configuration as an organisational asset, not individual expertise.
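The Layer 1 mitigation, a model-agnostic abstraction layer, can be sketched as an interface that every vendor adapter implements, with the organisation-owned prompt library kept outside any adapter. All class names here are hypothetical, and the adapters are stand-ins: a real adapter would wrap the vendor's own SDK call, which is deliberately not shown. Trying providers in order of preference means a deprecated or repriced model becomes a configuration change rather than a rewrite.

```python
from typing import Dict, List, Protocol, Tuple


class ModelProvider(Protocol):
    """Interface every vendor adapter implements; callers never import a vendor SDK."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Hypothetical adapter. A real one would wrap a vendor SDK call here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class RetiredProvider:
    """Hypothetical adapter simulating a deprecated or withdrawn model."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        raise RuntimeError("model deprecated")


class PromptLibrary:
    """Organisation-owned, versioned prompt templates: the proprietary layer."""

    def __init__(self, templates: Dict[Tuple[str, str], str]) -> None:
        self.templates = templates

    def render(self, name: str, version: str, **fields: str) -> str:
        return self.templates[(name, version)].format(**fields)


class ModelRouter:
    """Tries providers in order of preference, so no single vendor is a hard dependency."""

    def __init__(self, providers: List[ModelProvider]) -> None:
        if not providers:
            raise ValueError("at least one provider is required")
        self.providers = providers

    def complete(self, prompt: str) -> str:
        errors: List[str] = []
        for provider in self.providers:
            try:
                return provider.complete(prompt)
            except Exception as exc:  # outage, deprecation, quota, etc.
                errors.append(f"{provider.name}: {exc}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))
```

Because the prompt library renders plain strings and the router only sees the `ModelProvider` interface, the same governed prompts can be served by whichever provider is currently preferred.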

The 10-Question Vendor Assessment Checklist

Q1 Who owns the fine-tuned model weights produced by training on our proprietary data on your infrastructure? (Required answer: the organisation.)

Q2 How do we export all data -- training data, fine-tuning datasets, RAG indices -- on 48-hour notice? In what format?

Q3 What audit trail and compliance documentation do we have access to after contract termination?

Q4 What is your current pricing at our current usage volume? At 5x, 10x, and 50x current volume?

Q6 What is your SLA for API availability? Historical uptime? Compensation for SLA breaches?

Q7 Can you provide two reference customers who have migrated away from your platform and can speak to the exit experience?

Q8 What is your data residency and data processing location? Can it be restricted to AU or EU data zones?

Q9 What happens to our data in the event of your insolvency, acquisition, or product discontinuation?

Q10 What is the process for requesting all system logs, model documentation, and audit trails required for EU AI Act Annex IV compliance?

Section 8 - AI Decision by Stack Layer

Every enterprise AI programme involves multiple stack layers. The buy vs build decision is not made once -- it is made separately for each layer. The default recommendation by layer is below.

Foundation Model: BUY

Exception: training from scratch is commercially viable only for organisations operating at hyperscale with unique proprietary datasets.

Cloud Infrastructure: BUY

Exception: scale requirements exceed commercial cloud economics (extremely rare in enterprise AI).

Base AI Tooling: BUY or OPEN-SOURCE

Exception: specific requirements are not served by any available solution. Validate rigorously before committing to a build; vendor options expand monthly.

Integration & Orchestration: HYBRID

Condition: Buy the connectivity infrastructure (MCP-standard). Build the workflow logic, business rules, and process encoding that are uniquely yours.

AI Application Layer: DEPENDS

Condition: BUY for commodity use cases. BUILD for use cases where AI behaviour encodes your competitive moat. Apply the 3C Quick-Triage first.

Competitive Differentiator Layer: BUILD. No exception.

Condition: If this is your competitive moat, buying a solution your competitors can also purchase does not create advantage. It creates parity.

Section 9 - The Framework in Action: Three Worked Examples

The seven-factor framework produces different verdicts for different use cases. The three examples below apply it to typical enterprise AI scenarios and reach a different conclusion for each, which shows that it is a decision tool, not advocacy for a predetermined path.

Example 1 -- Customer Support Triage AI (Verdict: Buy)

Scenario: A 5,000-employee professional services firm deploying AI for first-line customer support triage: routing enquiries, answering FAQs, and escalating complex cases to human agents. Must be operational within 60 days. Engineering team of 8 fully committed to core product development.

Seven-Factor Result: Factor 1 (Competitive Differentiation): Low -- customer support triage is commodity. Buy. Factor 2 (Data Sovereignty): No constraint. Factor 3 (Time to Value): 60-day need. Strongly Buy. Factor 4 (Internal Capability): Team committed elsewhere. Buy. Factor 5 (TCO): Buy is favoured by approximately 40% over 3 years. Factor 6 (Lock-In): Moderate -- negotiate portability clauses. Factor 7 (Compliance): Vendor is SOC 2 compliant.

Verdict: BUY. Select a vendor platform with contractual data portability rights, SOC 2 Type II verification, and mandatory 12-month pricing lock. Do not attempt to build this use case -- the engineering investment creates no competitive moat and delays production by 12+ months.

Example 2 -- Proprietary Credit Risk Model (Verdict: Build)

Scenario: A fintech company replacing an incumbent credit scoring model with a proprietary AI model trained on 10 years of in-house transaction data. The model encodes unique risk signals not available to any competitor or vendor. Regulatory requirement for full model explainability. Long development timeline acceptable.

Seven-Factor Result: Factor 1 (Competitive Differentiation): Maximum -- encodes unique proprietary data and risk intelligence. Build. Factor 2 (Data Sovereignty): Cannot leave the organisation. Build. Factor 3 (Time to Value): 18-month timeline acceptable. Build eligible. Factor 4 (Internal Capability): Dedicated ML engineering team available. Factor 5 (TCO): Build is favoured when the proprietary performance advantage is monetised at scale. Factor 6 (Lock-In): No vendor dependency. Factor 7 (Compliance): Full explainability requirement eliminates black-box vendor options.

Verdict: BUILD. This is a textbook Firm Sovereignty AI system: unique proprietary data, maximum competitive differentiation, regulatory requirement for explainability, adequate internal capability. No vendor can produce this capability. The build cost is justified by the moat it creates.

Example 3 -- Enterprise Knowledge Management AI (Verdict: Hybrid)

Scenario: A global consulting firm building an AI knowledge management system -- surfacing relevant client case studies, methodologies, and expert knowledge for consultants during engagement delivery. Large proprietary document estate. Needs global deployment. Partnership with third party for initial build.

Seven-Factor Result: Factor 1: High differentiation (proprietary document estate). Factor 2: Some constraint (client confidentiality). Factor 3: Medium urgency (6-month timeline). Factor 4: Limited ML team -- partner required for initial build. Factor 5: Hybrid TCO comparable to pure Buy at scale, with differentiation advantage. Factor 6: Moderate lock-in risk if document estate locked to vendor RAG. Factor 7: SOC 2 required, data residency constraint.

Verdict: HYBRID. Buy the foundation model API (Anthropic Claude or equivalent) and the vector database infrastructure (Pinecone or equivalent). Build the proprietary knowledge graph, document classification logic, and client-facing retrieval interface. Partner for initial deployment with explicit capability transfer to internal team by Month 6. Hybrid delivers the competitive differentiation of a pure Build with the speed of a Buy.

Section 10 - The Decision Authority Matrix

Apply the following decision authority structure to all AI investment decisions. These thresholds are the minimum viable governance standard.

Decision type: required authority (threshold)

Buy, annual contract under $500K: CAIO + CFO approval (up to $500K annual)

Buy, annual contract over $500K: CAIO + CFO + CEO approval (over $500K annual)

Build, investment under $1M: CAIO + CFO + AI Governance Committee (up to $1M investment)

Build, investment over $1M: Full C-Suite + Board approval (over $1M investment)

Partner, any value: CAIO + Legal + CFO; IP ownership clause mandatory (all Partner engagements)

Hybrid, split authority: Buy layer: CAIO. Build layer: C-Suite review (threshold applied per component)
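The authority matrix can be encoded as a routing function so approval requirements are applied consistently. This is a hypothetical sketch: the function name is illustrative, `amount` means annual contract value for Buy and total investment for Build, and treating a value exactly at a threshold as the lower tier is an assumption, since the matrix only says "under" and "over". Partner and Hybrid ignore `amount`, matching the table.

```python
from typing import Dict, List


def required_authority(path: str, amount: float) -> Dict[str, List[str]]:
    """Return the approvers required for each component of a decision.

    amount: annual contract value (Buy) or total investment (Build).
    Assumption: a value exactly at a threshold is treated as the lower tier.
    """
    if path == "Buy":
        if amount <= 500_000:
            return {"Buy": ["CAIO", "CFO"]}
        return {"Buy": ["CAIO", "CFO", "CEO"]}
    if path == "Build":
        if amount <= 1_000_000:
            return {"Build": ["CAIO", "CFO", "AI Governance Committee"]}
        return {"Build": ["Full C-Suite", "Board"]}
    if path == "Partner":
        # Any value; the IP ownership clause is mandatory for every engagement.
        return {"Partner": ["CAIO", "Legal", "CFO"]}
    if path == "Hybrid":
        # Split authority, as listed in the matrix.
        return {"Buy layer": ["CAIO"], "Build layer": ["C-Suite review"]}
    raise ValueError(f"unknown path: {path!r}")
```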

Section 11 - Three Mandatory Contract Clauses

These three contract clauses are non-negotiable for every AI vendor contract. They protect the organisation's governance position, proprietary IP, and regulatory compliance evidence regardless of what happens to the vendor relationship. Any vendor that refuses to include these clauses has disclosed their lock-in intention.

DATA PORTABILITY: All data -- training data, fine-tuning datasets, RAG indices, system outputs, audit logs -- is exportable in a portable, standard format within 48 hours of a written request, including upon contract termination. No vendor proprietary format lock. Data is the organisation's property, not the vendor's.

IP OWNERSHIP: All model weights, fine-tuned models, and custom evaluation datasets produced by training on the organisation's proprietary data are the organisation's intellectual property -- not the vendor's. This clause survives contract termination. The vendor has no licensing rights over outputs produced from the organisation's data.

REGULATORY ACCESS: The organisation retains full access to all system logs, model documentation, audit trails, and technical documentation required for EU AI Act compliance and SOC 2 audits. This access is provided in full within 48 hours of request, at no additional cost, and survives contract termination for a period of not less than 7 years.
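During contract review, the three clauses can be checked against the negotiated terms as a simple gap report. A sketch only: the `ContractTerms` fields and `missing_clauses` are hypothetical names that restate the clause wording (48-hour export and access, standard formats, organisation-owned IP surviving termination, no-cost access for at least 7 years); they are not a legal template.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ContractTerms:
    export_window_hours: int                  # data export on written request
    export_format_is_standard: bool           # no vendor proprietary format lock
    portability_survives_termination: bool
    ip_owner: str                             # owner of weights, fine-tunes, eval datasets
    ip_survives_termination: bool
    regulatory_access_hours: int
    regulatory_access_cost: float             # must be zero
    regulatory_access_years_after_termination: int


def missing_clauses(t: ContractTerms) -> List[str]:
    """Return the mandatory clauses the negotiated terms fail to satisfy."""
    gaps: List[str] = []
    if (
        t.export_window_hours > 48
        or not t.export_format_is_standard
        or not t.portability_survives_termination
    ):
        gaps.append("DATA PORTABILITY")
    if t.ip_owner != "organisation" or not t.ip_survives_termination:
        gaps.append("IP OWNERSHIP")
    if (
        t.regulatory_access_hours > 48
        or t.regulatory_access_cost > 0
        or t.regulatory_access_years_after_termination < 7
    ):
        gaps.append("REGULATORY ACCESS")
    return gaps
```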

Section 12 - Download the Buy vs Build Decision Scorecard

The Buy vs Build Decision Scorecard is the practical implementation of the framework on this page: a weighted seven-factor scoring template across all four paths, the 3C Quick-Triage matrix, the 3-Year TCO comparison model, the Vendor Lock-In Assessment Checklist, and the three mandatory contract clauses. Excel format. No watermarks. Yours to use and adapt.

Buy vs Build Decision Scorecard (Excel/PDF, email required).


Matthew Bulat -- CAIO / AI Strategy Leader / Founder, Expert AI Prompts

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. Former CTO, Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and 8+ year University Lecturer at CQUniversity. The Buy vs Build Decision Framework on this page is the same framework applied in the Expert AI Prompts live platform architecture (30 industries, 1,500+ prompts, 15 AI workflow systems) and in the Gap Asset Portfolio presented to senior executive hiring panels.

Former CTO · Federal Government Technical Operations Manager · CQUniversity Lecturer (8+ years) · MACS CP · M.Eng.Tech
